Stumbled upon while searching for a reference: Richard Tol Changes Tune, Talks Carbon Tax. From what I’ve read, Tol is too much of a nonconformist to lump in with the professional skeptics, and he has probably always preferred a Hotelling-style carbon price trajectory, so I’m not convinced that this is really a change, but it’s intriguing.


Waiter, there's a carpet in my coffee

From the ArXiv blog: researchers have discovered a new fractal, closely matching a Sierpinski Carpet, in the boundary layer dynamics of coffee in milk. I don’t know how Rayleigh-Taylor instabilities work, but I do find occasional cool things in my coffee:

End of World Postponed

The LHC has been shut down before it had a chance to destroy the universe. It’ll be back up in a few months though, so as a precaution I’ve raised my discount rate to 1800%. Apparently the failure was caused by a magnet quench, which is a cool positive feedback.

Endogenous Energy Technology

I just created an annotated list of links on learning/experience curves, deliberate R&D, and other forms of endogenous energy technology, including a few models and empirical estimates. See del.icio.us/tomfid for details. Comments with more references will be greatly appreciated!

Backing Off on Ethanol

ST. PAUL (Reuters) – U.S. Republicans called on Monday for an end to a controversial requirement that gasoline contain a set amount of ethanol, a policy backed by the Bush administration that critics say has helped drive up world food prices.

In their 2008 platform detailing policy positions, Republicans said markets — not government — should determine how much ethanol is blended into gasoline, and pushed for development of a cellulosic version, which could be made from grasses rather than corn.

It will be interesting to see what this implies for California’s LCFS design.

Update

Corn belt Republicans are not pleased.

Contrast the new platform with the situation in 2005.

McCain seems to have done a double flip-flop, reversing his 2006 reversal of his 2000 campaign position.

Polar Bears and Lead Miners

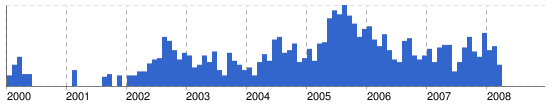

Google News, Trends, Insight

I’ve found trends on Google News interesting for some time. For example, did net news predict a housing bubble?

As online sources of such social data get richer, and control and normalization issues are solved or at least made transparent, they could become a useful input to behavioral models. Already, I find them to be a useful reality check, for seeing how long it takes for events to show up on popular radar, and whether things that seem big are really big in the public mind.

Drilling in America

I don’t usually have TV, but I’m in a hotel tonight. I just saw a McCain ad that would be funny if it weren’t serious. It starts with some blather about high gas prices, and a picture of an old pump (designed to trigger nostalgia for 25 cents a gallon?). It goes on to imply that domestic drilling is the oil security answer. Then it makes the really amazing assertion (“you know who’s to blame”) that the only thing standing in the way of domestic drilling is … Obama. Wow … I had no idea that one senator could single-handedly wield such power.

Climate Beliefs Follow Party Lines

From National Journal’s latest Congressional Insiders Poll. Perhaps this is another special case of the general phenomenon of elites being more politically polarized.

Effects of Global Change on the US

The Climate Change Science Program web site features the newly released Scientific Assessment of the Effects of Global Change on the United States on its front page, along with a Revised Research Plan for the CCSP.