Climate skeptics seem to have a thing for contests and bets. For example, there’s Armstrong’s proposed bet, baiting Al Gore. Amusingly (for data nerds anyway), the bet, which pitted a null forecast against the taker’s chosen climate model, could have been beaten easily by either a low-order climate model or a less-naive null forecast. And, of course, it completely fails to understand that climate science is not about fitting a curve to the global temperature record.

Another instance of such foolishness recently came to my attention. It doesn’t have a name that I know of, but here’s the basic idea:

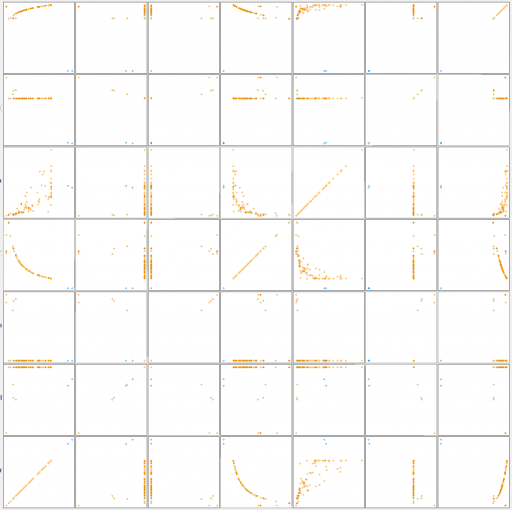

- The author generates 1000 time series:

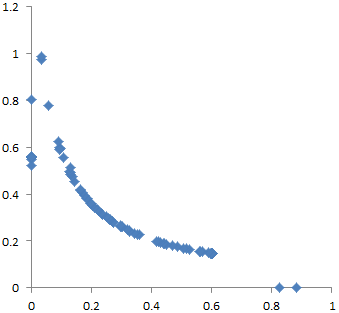

Each series has length 135: the same length as that of the most commonly studied series of global temperatures (which span 1880–2014). The 1000 series were generated as follows. First, 1000 random series were obtained (for more details, see below). Then, some of those series were randomly selected and had a trend added to them. Each added trend was either 1°C/century or −1°C/century. For comparison, a trend of 1°C/century is greater than the trend that is claimed for global temperatures.

- The challenger pays $10 for the privilege of attempting to detect which of the 1000 series are perturbed by a trend, winning $100,000 for correctly identifying 90% or more.
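
To make the setup concrete, here is a minimal sketch of a generator with the same shape as the one described. The base noise (a driftless random walk), the step size `step_sd`, and the fraction of perturbed series `p_trend` are all my assumptions for illustration; the contest used its own process.

```python
import numpy as np

N_SERIES = 1000         # number of series in the contest
N_YEARS = 135           # 1880-2014 inclusive
TREND_PER_YEAR = 0.01   # 1 °C/century, expressed per year

def make_contest_like_series(rng, step_sd=0.1, p_trend=0.5):
    """Return (series, labels); labels are -1, 0, or +1 for the added trend.

    ASSUMPTION: the base noise is a driftless random walk with Gaussian
    steps of standard deviation step_sd, and each series is perturbed
    with probability p_trend. Neither choice comes from the contest.
    """
    steps = rng.normal(0.0, step_sd, size=(N_SERIES, N_YEARS))
    base = np.cumsum(steps, axis=1)                 # random-walk noise
    has_trend = rng.random(N_SERIES) < p_trend      # which series get a trend
    sign = rng.choice([-1, 1], size=N_SERIES)       # warming or cooling
    labels = np.where(has_trend, sign, 0)
    years = np.arange(N_YEARS)
    series = base + labels[:, None] * TREND_PER_YEAR * years
    return series, labels

rng = np.random.default_rng(0)
series, labels = make_contest_like_series(rng)
print(series.shape, int((labels != 0).sum()), "series carry an added trend")
```

The challenger sees only `series` and has to reconstruct which entries of `labels` are nonzero.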

The best challenger managed to correctly identify 860 of the 1000 series, short of the 900 needed, so the prize went unclaimed. But only two challenges are described, so I have to wonder how many serious attempts were made. Had I known about the contest in advance, I would not have tried it. I know plenty about fitting dynamic models to data, though abstract statistical methods aren’t really my thing. But I still have to ask myself some questions:

- Is there really money to be made, or will the author simply abscond to the pub with my $10? For the sake of argument, let’s assume that the author really has $100k at stake.

- Is it even possible to win? The author did not reveal the process used to generate the series in advance. That alone makes this potentially a sucker bet. If you’re in control of the noise and structure of the process, it’s easy to generate series that are impossible to reliably disentangle. (Tellingly, the author later revealed the code to generate the series, but it appears there’s no code to successfully identify 90%!)
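
A rough sketch of why control over the noise process matters: the same naive detector (fit a line, threshold the slope) can look excellent or hopeless depending on what the noise is. Everything here is hypothetical, including the `simulate` generator, the noise scale `sd`, and the half-trend threshold; it is not the contest's process or any challenger's method.

```python
import numpy as np

N_SERIES, N_YEARS, TREND = 1000, 135, 0.01   # trend in °C per year

def simulate(rng, random_walk, sd=0.1):
    """Hypothetical generator: white noise, or its cumulative sum, plus a trend."""
    noise = rng.normal(0.0, sd, size=(N_SERIES, N_YEARS))
    if random_walk:
        noise = np.cumsum(noise, axis=1)             # integrate the noise
    labels = rng.choice([-1, 0, 0, 1], size=N_SERIES)  # about half get a trend
    years = np.arange(N_YEARS)
    return noise + labels[:, None] * TREND * years, labels

def classify_by_slope(series, threshold=TREND / 2):
    """Fit an OLS line to each series; call it trended if |slope| > threshold."""
    years = np.arange(series.shape[1])
    slopes = np.polyfit(years, series.T, 1)[0]       # one slope per series
    return np.where(slopes > threshold, 1, np.where(slopes < -threshold, -1, 0))

def accuracy(random_walk, seed=0):
    """Fraction of series whose label (-1, 0, +1) is recovered exactly."""
    rng = np.random.default_rng(seed)
    series, labels = simulate(rng, random_walk)
    return float(np.mean(classify_by_slope(series) == labels))

for name, rw in [("white noise", False), ("random walk", True)]:
    print(f"{name}: {accuracy(rw):.1%} classified correctly")
```

Under these assumptions the detector should be nearly perfect on white noise and fall well short of 90% on the random walk, because the walk's own wandering produces fitted slopes about as large as the added trend. Whoever picks the noise process effectively picks the odds.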

For me, the statistical properties of the contest make it an obvious non-starter. But does it have any redeeming social value? For example, is it an interesting puzzle that has something to do with actual science? Sadly, no.

The hidden assumption of the contest is that climate science is about estimating the trend of the global temperature time series. Yes, people do that. But it’s a tiny fraction of climate science, and it’s a diagnostic of models and data, not a real model in itself. Science in general is not about such things. It’s about getting a good model, not a good fit. In some places the author talks about real physics, but ultimately seems clueless about this – he’s content with unphysical models:

> Moreover, the Contest model was never asserted to be realistic.
>
> …
>
> Are ARIMA models truly appropriate for climatic time series? I do not have an opinion. There seem to be no persuasive arguments for or against using ARIMA models. Rather, studying such models for climatic series seems to be a worthy area of research.
>
> …
>
> Liljegren’s argument against ARIMA is that ARIMA models have a certain property that the climate system does not have. Specifically, for ARIMA time series, the variance becomes arbitrarily large, over long enough time, whereas for the climate system, the variance does not become arbitrarily large. It is easy to understand why Liljegren’s argument fails.
>
> It is a common aphorism in statistics that “all models are wrong”. In other words, when we consider any statistical model, we will find something wrong with the model. Thus, when considering a model, the question is not whether the model is wrong—because the model is certain to be wrong. Rather, the question is whether the model is useful, for a particular application. This is a fundamental issue that is commonly taught to undergraduates in statistics. Yet Liljegren ignores it.
>
> As an illustration, consider a straight line (with noise) as a model of global temperatures. Such a line will become arbitrarily high, over long enough time: e.g. higher than the temperature at the center of the sun. Global temperatures, however, will not become arbitrarily high. Hence, the model is wrong. And so—by an argument essentially the same as Liljegren’s—we should not use a straight line as a model of temperatures.
>
> In fact, a straight line is commonly used for temperatures, because everyone understands that it is to be used only over a finite time (e.g. a few centuries). Over a finite time, the line cannot become arbitrarily high; so, the argument against using a straight line fails. Similarly, over a finite time, the variance of an ARIMA time series cannot become arbitrarily large; so, Liljegren’s argument fails.
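
The variance property at issue in the passage above is easiest to see in the simplest integrated model, a random walk, i.e. ARIMA(0,1,0). It belongs to the integrated members of the family (differencing order of at least one); a stationary ARMA process has bounded variance. With independent zero-mean steps of variance $\sigma^2$:

$$
x_t = x_{t-1} + \varepsilon_t,\quad x_0 = 0
\;\Rightarrow\;
x_t = \sum_{s=1}^{t} \varepsilon_s,\qquad
\operatorname{Var}(x_t) = t\,\sigma^2,\qquad
\operatorname{sd}(x_{135}) = \sigma\sqrt{135} \approx 11.6\,\sigma .
$$

So the variance is indeed finite over 135 years, but it is not small. As a purely hypothetical illustration, steps with $\sigma = 0.1$ °C would give a typical end-of-series excursion of about 1.2 °C, comparable to the roughly 1.3 °C that a 1°C/century trend adds over the same span.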

Actually, no one in climate science uses straight lines to predict future temperatures, because forcing is rising, and therefore warming will accelerate. But that’s a minor quibble, compared to the real problem here. If your model is:

global temperature = f( time )

you’ve just thrown away 99.999% of the information available for studying the climate. (Ironically, the author’s entire point is that annual global temperatures don’t contain a lot of information.)

No matter how fancy your ARIMA model is, it knows nothing about conservation laws, robustness in extreme conditions, dimensional consistency, or real physical processes like heat transfer. In other words, it fails every reality check a dynamic modeler would normally apply, except the weakest – fit to data. Even its fit to data is near-meaningless, because it ignores all other series (forcings, ocean heat, precipitation, etc.) and has nothing to say about replication of spatial and seasonal patterns. That’s why this contest has almost nothing to do with actual climate science.
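
For contrast, here is a minimal sketch of about the lowest-order physical model there is: a zero-dimensional energy balance, C dT/dt = F(t) − λT. The parameter values and the forcing ramp are illustrative assumptions of mine, not fitted estimates or real forcing data. The point is the structure: temperature responds to forcing through a heat budget, rather than being a function of time.

```python
import numpy as np

def energy_balance(forcing, heat_capacity=8.0, feedback=1.2, dt=1.0):
    """Integrate C dT/dt = F(t) - lambda * T with forward Euler.

    forcing:       radiative forcing at each step, W/m^2
    heat_capacity: effective heat capacity C, W*yr/m^2/K (assumed value)
    feedback:      feedback parameter lambda, W/m^2/K (assumed value)
    dt:            time step in years
    Returns the temperature anomaly in K at each step, starting from 0.
    """
    temp = np.zeros(len(forcing))
    for i in range(1, len(forcing)):
        net_flux = forcing[i - 1] - feedback * temp[i - 1]  # energy imbalance
        temp[i] = temp[i - 1] + dt * net_flux / heat_capacity
    return temp

years = np.arange(1880, 2015)
forcing = np.linspace(0.0, 2.5, len(years))   # hypothetical ramp, not data
temp = energy_balance(forcing)
print(f"anomaly in {years[-1]}: {temp[-1]:.2f} K; "
      f"equilibrium for final forcing: {forcing[-1] / 1.2:.2f} K")
```

Even this toy passes checks that a curve fit cannot: the units balance, energy is accounted for, constant forcing leads to a finite equilibrium F/λ instead of unbounded drift, and it can be confronted with forcing and ocean heat data as well as temperature.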

This is also why data-driven machine learning approaches have a long way to go before they can handle general problems. It’s comparatively easy to learn to recognize the cats in a database of photos, because the data spans everything there is to know about the problem. That’s not true for systemic problems, where you need a web of data and structural information at multiple scales in order to understand the situation.