arXiv covers modeling on an epic scale in Europe’s Plan to Simulate the Entire Earth: a billion-dollar plan to build a huge infrastructure for global multiagent models. The core is a massive exaflop “Living Earth Simulator” – essentially the socioeconomic version of the Earth Simulator.

I admire the audacity of this proposal, and there are many good ideas captured in one place:

- The goal is to take on emergent phenomena like financial crises (getting away from the paradigm of incremental optimization of stable systems).

- It embraces uncertainty and robustness through scenario analysis and Monte Carlo simulation.

- It mixes modeling with data mining and visualization.

- The general emphasis is on networks and multiagent simulations.

I have no doubt that such a project would generate many interesting spinoffs. However, I suspect that the core goal of creating a realistic global model will be an epic failure, for three reasons.

- The first is technical. Global Climate Models and other physical simulations are nowhere near their potential realism on today’s petaflop computers – they’re still hungry. Adding socioeconomic constructs to such models would cause a combinatorial explosion that would overwhelm the increase in computing power. Even if you threw out the whole gridded biogeophysical system, the problem would reemerge immediately with the addition of the aspirational 10 billion agents. Quality control and understanding would then become a problem, because you’re not going to do any meaningful uncertainty analysis on a model that’s pushing computational limits for a single run.

- The second is practical. Current large parallel models are successful mainly in areas where there’s a sound foundation of physical principles, and a limited number of highly uncertain measurements or parameterized behaviors (like clouds in GCMs). The proposed territory has the opposite properties: a modest backbone of known phenomena, and a vast array of ill-posed constructs, with little agreement about even the proper modeling paradigm. The most model-intensive discipline in the territory, economics, almost exclusively uses idealized agents and has little to say about complexity. Thus, even if the technical problems are solvable, the result would probably be like giving a machine gun to a monkey.

- Third, the fundamental theory of change is flawed, for reasons explained in John Sterman’s video clip. The big model approach essentially hopes that we will find the socioeconomic equivalent of the God particle, and that finding it will help us to achieve environmental sustainability, financial stability, and world peace. Even if we found it, it wouldn’t help, unless it were a kind of meta-God particle: the secret sauce that gets people to work together in their own long-term enlightened self-interest. If there’s some hope of that, then this project needs a lot more implementation design, and a lot less computing hardware, because it’s not likely to be a solution that’s enforceable top-down by technocrats.

If there was ever a time to take to heart Coyle’s worry that adding too many soft variables (or just too many variables) to a model would cloud understanding and fail a cost-benefit test, this is it.

In spite of the challenges, it’s crucial to explore this terrain. We have two kinds of problems in the world: physical and social. The physical problems – climate, persistent pollutants, resource depletion – are actually quite simple. There are uncertain measurements, but fundamentally they are bathtub problems, and we don’t need any more models to know how to manage a bathtub. There are multiple well-known solutions to getting a system to observe a stock constraint; the problem is that there isn’t enough understanding and will to implement the solution. That’s a social problem, much like our other persistent social problems: poverty, war, racism, etc. So, it’s the latter we really need insight into. I seriously doubt that we will get that insight from bigger models, just as the wave of bigger global models following World Dynamics and World3 didn’t yield any more insight, and now lies largely forgotten.
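To make the bathtub point concrete, here is a minimal sketch in Python of a single stock with a constant inflow and outflow. The function name `simulate_bathtub` and all the numbers are illustrative assumptions, not taken from any dataset or from the proposal. It only shows the basic logic: the level keeps rising as long as inflow exceeds outflow, so merely reducing the inflow is not enough.

```python
def simulate_bathtub(stock, inflow, outflow, steps, dt=1.0):
    """Integrate one stock with constant flows using simple Euler steps."""
    history = [stock]
    for _ in range(steps):
        # The stock changes by the net flow; it cannot drop below empty.
        stock = max(0.0, stock + (inflow - outflow) * dt)
        history.append(stock)
    return history


# Illustrative numbers only.
rising = simulate_bathtub(stock=100.0, inflow=10.0, outflow=4.0, steps=5)
# Halving the inflow still leaves it above the outflow: the level keeps rising.
still_rising = simulate_bathtub(stock=100.0, inflow=5.0, outflow=4.0, steps=5)
# Only when inflow is cut to match the outflow does the level stop rising.
stable = simulate_bathtub(stock=100.0, inflow=4.0, outflow=4.0, steps=5)

print(rising[-1], still_rising[-1], stable[-1])
```

Nothing about this requires an exaflop machine, which is the point: the structure is simple, and the hard part is getting people to act on it.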

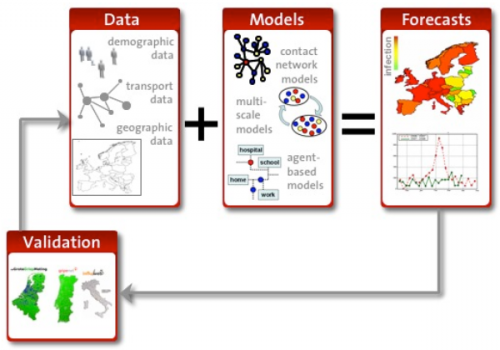

What to do instead? I see nothing wrong with most of the aims of this proposal, but the emphasis needs to shift away from Big Science hardware and models of everything. It’s fine to have some exascale computing, but the infrastructure for diffusing knowledge among practitioners is more important than the computing infrastructure, and the infrastructure for making data, output, structure and insight transparent to the public is more important still. The emphasis on big models needs to be preceded by an emphasis on good models. Sadly, a lot of the agent models I’ve seen are rubbish, because the population dynamics obscure the fact that individual agent behavior is nonsensical. If we’re going to build big models, we need much better ways of ensuring the quality and consistency of the components, not just the outer loop of forecast verification (in the graphic above). While we’re at it, forget about forecasting altogether … robust design is a better paradigm in this environment. At the least, interpret forecasting not as point prediction, but as a more general replication of phenomena. In any case, if successful forecasts of complex social system phenomena were possible, they wouldn’t come true – the quantum observer problem – unless perhaps the information were held closely by a modeling priesthood.
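As a toy illustration of the difference between forecasting and robust design, the sketch below compares two hypothetical policies across three hypothetical scenarios. The policy names, scenario names, and payoff numbers are all invented for illustration. Choosing by a single "baseline" point forecast picks one policy; choosing by worst case or by minimax regret (one common robustness criterion, used here as an assumption) picks the other.

```python
# Hypothetical payoffs[policy][scenario]; higher is better. Numbers are made up.
payoffs = {
    "optimized_for_forecast": {"boom": 10, "baseline": 8, "crisis": -20},
    "robust": {"boom": 7, "baseline": 6, "crisis": 2},
}


def worst_case(policy):
    """Lowest payoff the policy yields across all scenarios."""
    return min(payoffs[policy].values())


def max_regret(policy):
    """Largest shortfall versus the best available policy in each scenario."""
    regrets = []
    for scenario in payoffs[policy]:
        best_here = max(p[scenario] for p in payoffs.values())
        regrets.append(best_here - payoffs[policy][scenario])
    return max(regrets)


# Point-forecast thinking: assume the baseline scenario and optimize for it.
best_by_forecast = max(payoffs, key=lambda p: payoffs[p]["baseline"])
# Robust-design thinking: look across scenarios instead.
best_by_worst_case = max(payoffs, key=worst_case)
best_by_regret = min(payoffs, key=max_regret)

print(best_by_forecast, best_by_worst_case, best_by_regret)
```

The forecast-driven choice looks best only if the forecast comes true; the robust choice gives up a little in good times to avoid disaster in bad ones.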

So, Europe, please dump a billion into modeling, but don’t promise or expect a miracle, and make sure that the tide of funding lifts all boats, raising broad modeling capabilities and the general level of public understanding, because that’s the way this might make a difference.

This is funny. I’m working on exactly the same thing, but as a work of conceptual/installation/performance art. That way my math doesn’t have to be so rigorous & it doesn’t have to be predictive. Or even useful at all. Getting around the computing power issue by using a fluid computer a la Phillips machine.

Model of everything = BIG FUN!