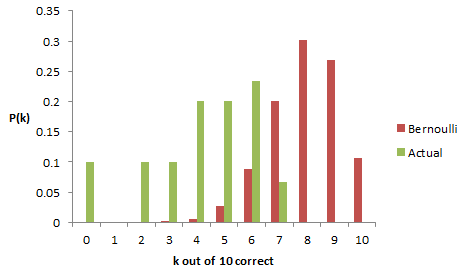

I ran my updated Capen quiz at the beginning of my Vensim mini-course on optimization and uncertainty at the System Dynamics conference. The results were pretty typical: people expressed confidence bounds that were too narrow relative to their actual knowledge of the questions. Their effective confidence level was therefore about 40%, rather than the desired 80%; on average, only about 4 of the 10 intervals contained the true answer. Here's the distribution of actual scores from about 30 people, compared to a Binomial(10, 0.8) distribution:

(I'm reconstructing the actual distribution from memory, because I forgot to grab the flipchart of results. Did anyone take a picture? I won't trouble you with my confidence bounds on the confidence bounds.)
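For readers who want to check the comparison themselves, here is a minimal Python sketch. It assumes each of the 10 questions counts as a hit when the true value lies inside the stated bounds, and that hits are independent with a fixed probability, which is what the Binomial(10, 0.8) benchmark implies. The helper names (`score_quiz`, `effective_confidence`, `binomial_pmf`) are hypothetical, and no actual quiz data is included.

```python
from math import comb


def score_quiz(bounds, answers):
    """Hypothetical scorer: count how many true answers fall inside [low, high]."""
    return sum(low <= x <= high for (low, high), x in zip(bounds, answers))


def effective_confidence(scores, n=10):
    """Pooled hit rate: fraction of all stated intervals that captured the truth."""
    return sum(scores) / (n * len(scores))


def binomial_pmf(n, p):
    """P(score = k) for k = 0..n when each interval independently hits with probability p."""
    return [comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]


calibrated = binomial_pmf(10, 0.8)     # what well-calibrated 80% bounds should produce
overconfident = binomial_pmf(10, 0.4)  # what a 40% effective confidence produces

print(" k  p=0.8  p=0.4")
for k, (pc, po) in enumerate(zip(calibrated, overconfident)):
    print(f"{k:2d}  {pc:.3f}  {po:.3f}")

# Chance that a genuinely calibrated respondent scores 4 or less
print(f"P(score <= 4 | p = 0.8) = {sum(calibrated[:5]):.4f}")
```

Under the calibrated benchmark the expected score is 10 × 0.8 = 8, and a score of 4 or below should occur well under 1% of the time, so a room full of 3s, 4s, and 5s is hard to explain as bad luck.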

My take on this is that it's simply very hard to be well calibrated intuitively, unless you set aside time to contemplate uncertainty explicitly. But it is a learnable skill: my kids, who had already taken the original Capen quiz, managed to score 7 out of 10.

Even if you can get calibrated on a set of independent questions, real-world problems where dimensions covary are really tough to handle intuitively. This is yet another example of why you need a model.
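A toy Monte Carlo sketch of the covariance point, under purely illustrative assumptions: two uncertain quantities A and B, each standard normal, with correlation `rho`. It estimates the 80% interval (10th to 90th percentile) of their sum, showing how strongly that interval depends on the correlation, which is the kind of effect that is hard to judge by intuition. The function names, the normal distributions, and the 0.9 correlation are hypothetical choices, not anything from the quiz.

```python
import random
import statistics


def interval_80(samples):
    """Empirical 80% interval: the 10th and 90th percentiles of the samples."""
    cuts = statistics.quantiles(samples, n=10)  # 9 cut points: 10%, 20%, ..., 90%
    return cuts[0], cuts[-1]


def simulate_sum(rho, n=100_000, seed=1):
    """Sample A + B, where A and B are standard normal with correlation rho."""
    rng = random.Random(seed)
    out = []
    for _ in range(n):
        z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
        a = z1
        b = rho * z1 + (1 - rho**2) ** 0.5 * z2  # gives corr(a, b) = rho
        out.append(a + b)
    return out


for rho in (0.0, 0.9):
    lo, hi = interval_80(simulate_sum(rho))
    print(f"rho = {rho}: 80% interval of A + B is about [{lo:.2f}, {hi:.2f}]")
```

With independent quantities the interval should come out near ±1.8; at rho = 0.9 it widens to roughly ±2.5, even though each component's own bounds are unchanged.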
