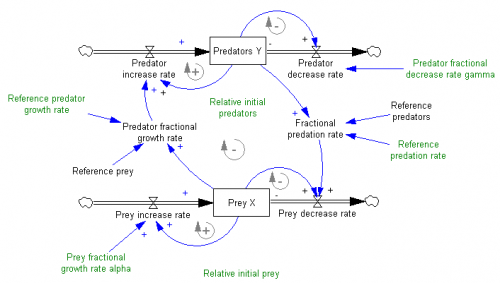

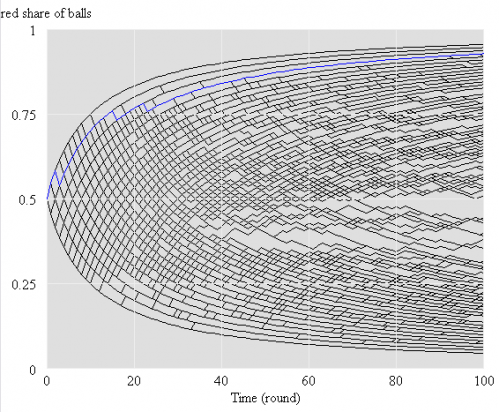

I just picked up a copy of Hartmut Bossel’s excellent System Zoo 1, which I’d seen years ago in German, but only recently discovered in English. This is the first of a series of books on modeling – it covers simple systems (integration, exponential growth and decay), logistic growth and variants, oscillations and chaos, and some interesting engineering systems (heat flow, gliders searching for thermals). These are high quality models, with units that balance, well-documented by the book. Every one I’ve tried runs in Vensim PLE so they’re great for teaching.

I haven’t had a chance to work my way through the System Zoo 2 (natural systems – climate, ecosystems, resources) and System Zoo 3 (economy, society, development), but I’m pretty confident that they’re equally interesting.

You can get the models for all three books, in English, from the Uni Kassel Center for Environmental Systems Research – it’s now easy to find a .zip archive of the zoo models for the whole series, in Vensim .mdl format, on CESR’s home page: www2.cesr.de/downloads.

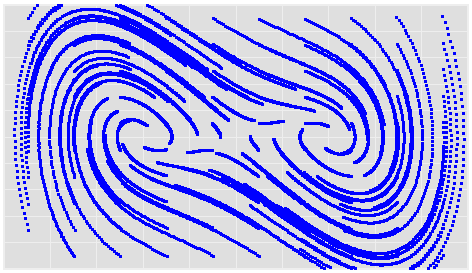

To tantalize you, here are some images of model output from Zoo 1. First, a phase map of a bistable oscillator, which was so interesting that I built one with my kids, using legos and neodymium magnets:

Continue reading “A System Zoo”

While times have changed, the dynamics described by these models are still widespread.

While times have changed, the dynamics described by these models are still widespread.