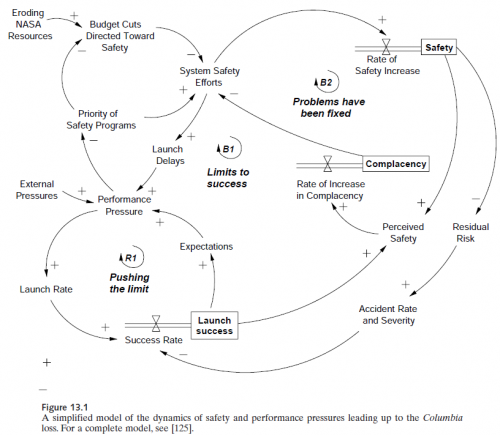

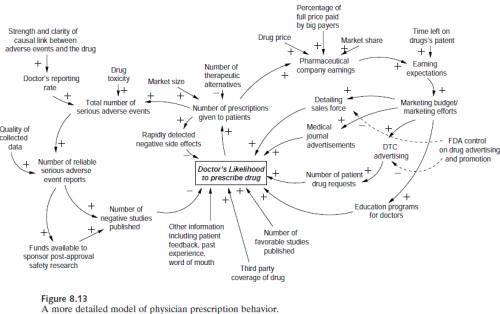

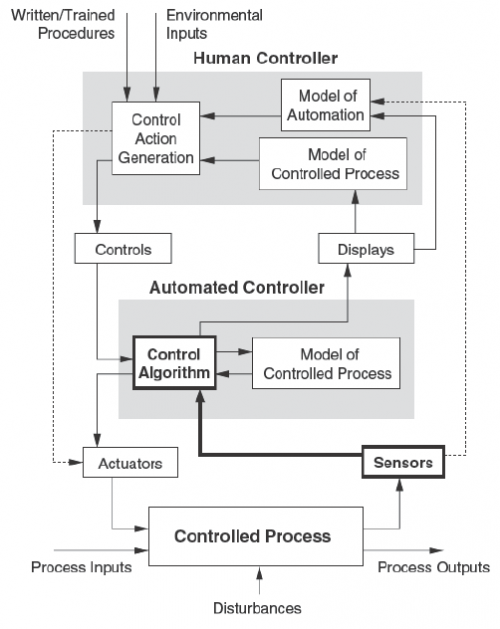

Accidents involve much more than the reliability of parts. Safety emerges from the systemic interactions of devices, people and organizations. Nancy Leveson’s Engineering a Safer World (free PDF currently at the MIT Press link, lower left) picks up many of the threads in Perrow’s classic Normal Accidents, adds much more, and weaves it all into a formal theory of systems safety. The book comes to life with many interesting examples and prescriptions for best practice.

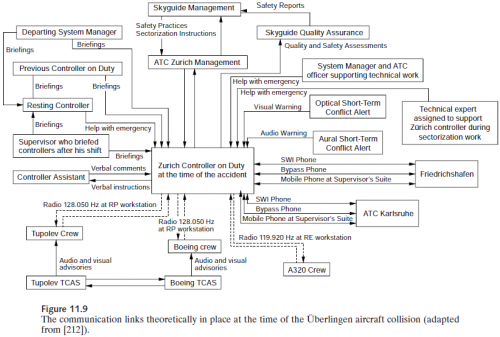

So far, I’ve only had time to read this the way I read the New Yorker (cartoons first), but a few pictures give a sense of the rich systems perspectives it brings to bear on the problems of safety:

The contrast between the figure above and the one that follows in the book, showing links that were actually in place, is striking. (I won’t spoil the surprise – you’ll have to go look for yourself.)