Needlessly provocative title notwithstanding, the dairy industry has to be one of the most spectacular illustrations of the battle for control of system leverage points. In yesterday’s NYT:

Domino’s Pizza was hurting early last year. Domestic sales had fallen, and a survey of big pizza chain customers left the company tied for the worst tasting pies.

Then help arrived from an organization called Dairy Management. It teamed up with Domino’s to develop a new line of pizzas with 40 percent more cheese, and proceeded to devise and pay for a $12 million marketing campaign.

Consumers devoured the cheesier pizza, and sales soared by double digits. “This partnership is clearly working,” Brandon Solano, the Domino’s vice president for brand innovation, said in a statement to The New York Times.

But as healthy as this pizza has been for Domino’s, one slice contains as much as two-thirds of a day’s maximum recommended amount of saturated fat, which has been linked to heart disease and is high in calories.

And Dairy Management, which has made cheese its cause, is not a private business consultant. It is a marketing creation of the United States Department of Agriculture — the same agency at the center of a federal anti-obesity drive that discourages over-consumption of some of the very foods Dairy Management is vigorously promoting.

Urged on by government warnings about saturated fat, Americans have been moving toward low-fat milk for decades, leaving a surplus of whole milk and milk fat. Yet the government, through Dairy Management, is engaged in an effort to find ways to get dairy back into Americans’ diets, primarily through cheese.

Now recall Donella Meadows’ list of system leverage points:

Leverage points to intervene in a system (in increasing order of effectiveness)

12. Constants, parameters, numbers (such as subsidies, taxes, standards)

11. The size of buffers and other stabilizing stocks, relative to their flows

10. The structure of material stocks and flows (such as transport network, population age structures)

9. The length of delays, relative to the rate of system changes

8. The strength of negative feedback loops, relative to the effect they are trying to correct against

7. The gain around driving positive feedback loops

6. The structure of information flow (who does and does not have access to what kinds of information)

5. The rules of the system (such as incentives, punishment, constraints)

4. The power to add, change, evolve, or self-organize system structure

3. The goal of the system

2. The mindset or paradigm that the system – its goals, structure, rules, delays, parameters – arises out of

1. The power to transcend paradigms

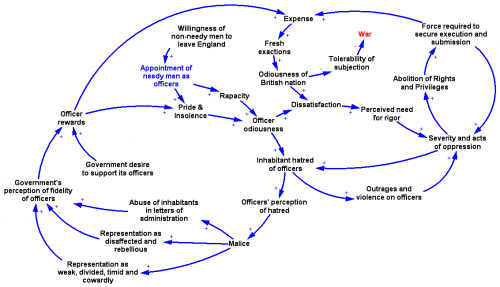

The dairy industry has become a master at exercising these points, in particular using #4 and #5 to influence #6, resulting in interesting conflicts about #3.

Specifically, Dairy Management is funded by a “checkoff” (effectively a tax) on dairy output. That money basically goes to marketing of dairy products. A fair amount of that is done in stealth mode, through programs and information that appear to be generic nutrition advice, but happen to be funded by the NDC, CNFI, or other arms of Dairy Management. For example, there’s http://www.nutritionexplorations.org/ – for kids, they serve up pizza:

That slice of “combination food” doesn’t look very nutritious to me, especially if it’s from the new Domino’s line DM helped create. Notice that it’s cheese pizza, devoid of toppings. And what’s the gratuitous bowl of mac & cheese doing there? Elsewhere, their graphics reweight the food pyramid (already a grotesque product of lobbying science) to give all components equal visual weight. This systematic slanting of nutrition information is a nice example of my first deadly sin of complex system management.

A conspicuous target of dubious dairy information is school nutrition programs. Consider this, from GotMilk:

Flavored milk contributes only small amounts of added sugars to children’s diets. Sodas and fruit drinks are the number one source of added sugars in the diets of U.S. children and adolescents, while flavored milk provides only a small fraction (< 2%) of the total added sugars consumed.

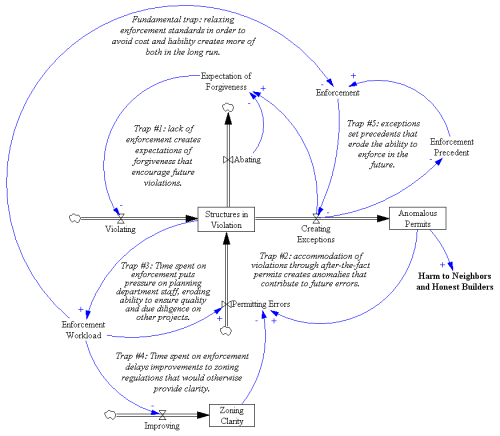

It’s tough to fact-check this, because the citation doesn’t match the journal. But the statement that flavored milk provides only a small fraction of added sugars is likely a red herring: the fraction is probably small because flavored milk is a small share of total intake, not because the sugar added per unit of flavored milk is small. Much of the rest of the information provided is a similar riot of conflated correlation and causation, backed by dairy-sponsored research. I have to wonder whether innovations like flavored milk are helpful, because they displace sugary soda, or are just one more trip around a big eroding goals loop that results in kids who won’t eat anything without sugar in it.
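To make the share-versus-marginal distinction concrete, here is a minimal sketch with made-up numbers. The servings and grams of added sugar below are illustrative assumptions, not figures from GotMilk or any dietary survey, and the sketch covers drinks only.

```python
# Hypothetical weekly drink intake for one child. Every number here is an
# illustrative assumption, not survey data.
servings_per_week = {"soda": 10.0, "fruit drink": 6.0, "flavored milk": 1.0}
added_sugar_g_per_serving = {"soda": 26.0, "fruit drink": 20.0, "flavored milk": 12.0}


def added_sugar_shares(servings, sugar_per_serving):
    """Return each drink's share of total added sugar (servings x grams per serving)."""
    totals = {name: servings[name] * sugar_per_serving[name] for name in servings}
    grand_total = sum(totals.values())
    return {name: grams / grand_total for name, grams in totals.items()}


# Case 1: flavored milk is a small part of what the child drinks.
shares_low = added_sugar_shares(servings_per_week, added_sugar_g_per_serving)

# Case 2: same sugar per serving, but flavored milk is consumed as often as soda.
more_milk = dict(servings_per_week, **{"flavored milk": 10.0})
shares_high = added_sugar_shares(more_milk, added_sugar_g_per_serving)

print(f"flavored milk share, 1 serving/week:   {shares_low['flavored milk']:.1%}")
print(f"flavored milk share, 10 servings/week: {shares_high['flavored milk']:.1%}")
```

With these assumed inputs, flavored milk supplies about 3% of the added sugar from drinks even though each serving carries nearly half the sugar of a soda. Raise consumption to ten servings a week and the share climbs to about 24%, with no change in the per-serving sugar. A small share says little about what each additional carton adds.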

Elsewhere in the dairy system, there are price supports for producers at one end of the supply chain. At the consumer end, there are price ceilings, meant to preserve the affordability of dairy products. It’s unclear what this bizarre system of incentives at cross-purposes really delivers, other than confusion.

The fundamental problem, I think, is that there’s no transparency: no immediate feedback from eating patterns to health outcomes, and little visibility of the convoluted system of rules and subsidies. That leaves marketers and politicians free to push whatever they want.

So, how to close the loop? Unfortunately, many eaters appear to be uninterested in closing the loop themselves by actively seeking unbiased information, or even actively resist information that contradicts their current patterns, dismissing it as the product of some kind of conspiracy. That leaves only natural selection to close the loop. Not wanting to experience that personally, I implemented my own negative feedback loop. I bought a cholesterol meter and modified my diet until I routinely tested OK. Sadly, that meant no more dairy.
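For the curious, that kind of measure-and-adjust loop can be sketched as a toy goal-seeking model. Everything in it is an assumption for illustration: the function name, the numbers, and especially the linear response of the reading to intake, which is a modeling convenience and not a claim about physiology.

```python
def close_the_loop(reading, target, intake, sensitivity, gain,
                   tolerance=1.0, max_steps=50):
    """Toy negative feedback loop: measure, compare to target, cut intake.

    reading     -- current test result (arbitrary units, hypothetical)
    target      -- reading considered OK
    intake      -- current weekly intake of the food being adjusted
    sensitivity -- assumed drop in reading per unit of intake removed
    gain        -- fraction of the gap converted into an intake cut each step
    """
    history = [(reading, intake)]
    for _ in range(max_steps):
        gap = reading - target               # the discrepancy that drives the loop
        if gap <= tolerance:                 # close enough: stop adjusting
            break
        new_intake = max(0.0, intake - gain * gap)      # intake cannot go negative
        reading -= sensitivity * (intake - new_intake)  # assumed linear response
        intake = new_intake
        history.append((reading, intake))
    return history


# Hypothetical starting point: reading 240 against a target of 200, 20 servings a week.
history = close_the_loop(reading=240.0, target=200.0, intake=20.0,
                         sensitivity=2.0, gain=0.1)
final_reading, final_intake = history[-1]
print(f"steps: {len(history) - 1}, reading: {final_reading:.1f}, intake: {final_intake:.1f}")
```

Each pass shrinks the gap by a fixed fraction, which is the signature of a negative feedback loop. With these particular assumed numbers, the reading only approaches the target as intake approaches zero, which is roughly how my own experiment turned out.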