Read all about it at Climate Interactive.

NUMMI – an innovation killed by its host's immune system?

This American Life had a great show on the NUMMI car plant, a remarkable joint venture between Toyota and GM. It sheds light on many of the reasons for the decline of GM and the American labor movement. More generally, it’s a story of a successful innovation that failed to spread, due to policy resistance, inability to confront worse-before-better behavior and other dynamics.

The story echoes a lot of system dynamics work on manufacturing. Here’s a brief reading list:

- Workers fear improving themselves out of a job

- TQM improvements can undercut themselves when they interact with existing organizational systems

- Firefighting is a trap that keeps lines running in a low-productivity state

- Engineering and production don’t get along (I think there’s more on this in Daniel Kim’s thesis)

- Systems have trouble making far-sighted decisions when confronted with worse-before-better behavior (but there might be good reasons)

Montana's climate future

A selection of data and projections on past and future climate in Montana:

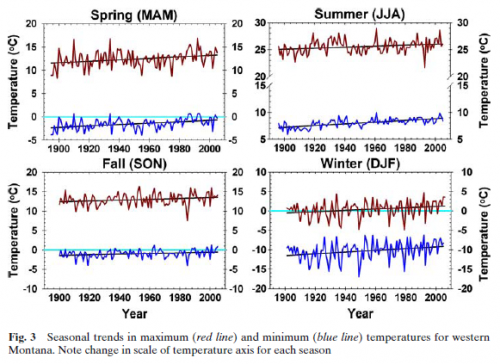

Pederson et al. (2010) A century of climate and ecosystem change in Western Montana: what do temperature trends portend? Climatic Change 98:133-154. It’s hard to read precisely off the graph, but there have been significant increases in maximum and minimum temperatures, with the greatest increases in the minimums and in winter – exactly what you’d expect from a change in radiative properties. As a result the daily temperature range has shrunk slightly and there are fewer below-freezing and below-zero days. That last metric is critical, because it’s the severe cold that controls many forest pests. There’s much more on this in a poster.

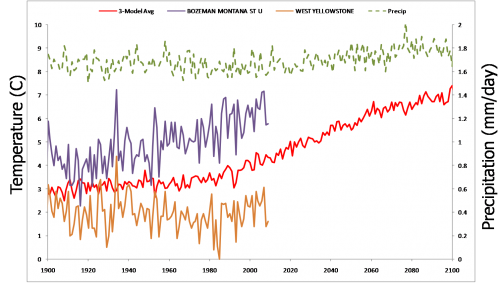

Not every station shows a trend – the figure above contrasts Bozeman (purple, strong trend) with West Yellowstone (orange, flat). The Bozeman trend is probably not an urban heat island effect – surfacestations.org thinks it’s a good site, and White Sulphur (a nice sleepy town up the road a piece) is about the same. The red line is an ensemble of simulations (GISS, CCSM & ECHAM5) from climexp.knmi.nl, projected into the future with A1B forcings (i.e., a fairly high emissions trajectory). I interpolated the data to latitude 47.6, longitude -110.9 (roughly my house, near Bozeman). Simulated temperature rises about 4C, while precipitation (green) is almost unmoved. If that came true, Montana’s future climate might be a lot like current central Utah.

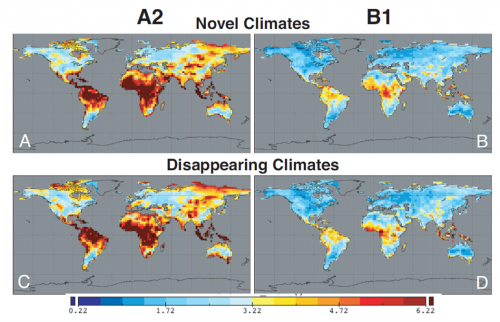

The figure above – from John W. Williams, Stephen T. Jackson, and John E. Kutzbach. Projected distributions of novel and disappearing climates by 2100 AD. PNAS, vol. 104 no. 14 – shows global grid points that have no neighbors within 500km that now have a climate like what the future might bring. In panel C (disappearing climates with the high emissions A2 scenario), there’s a hotspot right over Montana. Presumably that’s loss of today’s high altitude ecosystems. As it warms up, climate zones move uphill, but at the top of mountains there’s nowhere to go. That’s why pikas may be in trouble.

Sea Level Roundup

RealClimate has Martin Vermeer’s reflections on the making of his recent sea level paper with Stefan Rahmstorf. At some point I hope to post a replication of that study, in a model with the Grinsted and Rahmstorf 2007 structures, but I haven’t managed to replicate it yet. The problem may be that I haven’t yet tackled the reservoir storage issue.

At Nature Reports, Olive Heffernan introduces several sea level articles. Rahmstorf contrasts the recent set of semi-empirical models, predicting sea level rise of a meter or more this century, with the AR4 finding. Lowe and Gregory wonder if the semi-empirical models are really seeing enough of the dynamic ice signal to have predictive power, and worry about overadaptation to high scenarios. Mark Schrope reports on underadaptation – vulnerable developments in Florida. Mason Inman reports on ecological engineering, a softer approach to coastal defense.

Who eats the risk?

From the Asilomar geoengineering conference, via WorldChanging:

Lesson two: Nobody has any clear idea how to resolve the inequalities inherent in geoengineering. One of the most quoted remarks at the conference came from Pablo Suarez, the associate director of programs with the Red Cross/Red Crescent Climate Centre, who asked during one plenary session, “Who eats the risk?” In Suarez’s view, geoengineering is all about shifting the risk of global warming from rich nations — i.e., those who can afford the technologies to manipulate the climate — to poor nations. Suarez admitted that one way to resolve this might be for rich nations to pay poor nations for the damage caused by, say, shifting precipitation patterns. But that conjured up visions of Bangladeshi farmers suing Chinese geoengineers for ruining their rice crop — a legalistic can of worms that nobody was willing to openly explore.

If geoengineering is a for-profit operation, it presumably also involves the public bearing the risk of private acts, because investors aren’t likely to have an appetite for the essentially unlimited liability.

US manufacturing … are you high?

The BBC today carries the headline, “US manufacturing output hits 6 year high.” That sounded like an April Fool’s joke. Sure enough, FRED shows manufacturing output 15% below its 2007 peak at the end of last year, a gap that would be almost impossible to make up in a quarter. The problem is that the ISM-PMI index reported by the BBC is a measure of growth, not absolute level. The BBC has confused the stock (output) with the flow (output growth). In reality, things are improving, but there’s still quite a bit of ground to cover to recover the peak.
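The arithmetic behind that claim can be sketched in a few lines. The index values below are hypothetical, chosen only to illustrate how a growth measure can hit a multi-year high while the level is still well below its peak; the 15% shortfall is the figure quoted above.

```python
def growth_rates(levels):
    """Period-over-period fractional growth of a level series."""
    return [later / earlier - 1.0 for earlier, later in zip(levels, levels[1:])]


def required_growth(shortfall):
    """Growth needed to return to a peak from a level `shortfall` below it."""
    return 1.0 / (1.0 - shortfall) - 1.0


# Hypothetical output index: a peak, a slump, then a brisk partial recovery.
levels = [100.0, 102.0, 104.0, 95.0, 85.0, 86.0, 89.0]
rates = growth_rates(levels)

growth_at_high = rates[-1] == max(rates)   # growth is the best in the series
level_below_peak = levels[-1] < max(levels)  # but the level has not recovered

if __name__ == "__main__":
    print(f"latest growth is the series high: {growth_at_high}")
    print(f"latest level is below the peak:   {level_below_peak}")
    print(f"growth needed to erase a 15% gap: {required_growth(0.15):.1%}")
```

Note that recovering from a 15% shortfall takes more than 15% growth, because the growth applies to the smaller post-slump base.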

One child at the crossroads

China’s one child policy is at its 30th birthday. Inside-Out China has a quick post on the debate over the future of the policy. That caught my interest, because I’ve seen recent headlines calling for an increase in China’s population growth to facilitate dealing with an aging population – a potentially disastrous policy that nevertheless has adherents in many countries, including the US.

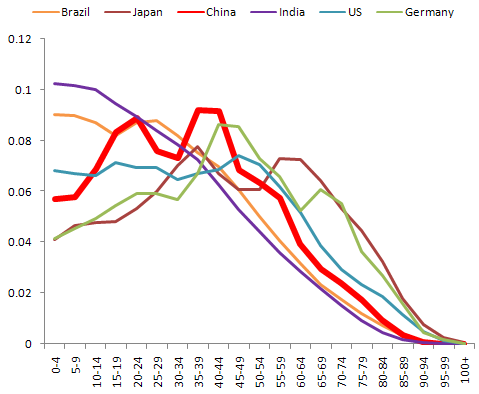

Here are the age structures of some major countries, young and old:

Vertical axis indicates the fraction of the population that resides in each age category.

Germany and Japan have the pig-in-the-python shape that results from falling birthrates. The US has a flatter age structure, presumably due to a combination of births and immigration. Brazil and India have very young populations, with the mode at the left hand side. Given the delay between birth and fertility, that builds in a lot of future growth.

Compared to Germany and Japan, China hardly seems to be on the verge of an aging crisis. In any case, given the bathtub delay between birth and maturity, a baby boom wouldn’t improve the dependency ratio for almost two decades.
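The delay can be illustrated with a toy cohort model. This is a minimal sketch, not a demographic projection: it assumes a stationary starting population with one unit per single-year age, no mortality before a hypothetical maximum age of 80, working ages of 20 to 64, and a permanent 20% step increase in births. All of those numbers are illustrative assumptions.

```python
def dependency_ratio(cohorts):
    """(young + old) / working, with working ages 20-64 (assumed)."""
    young = sum(cohorts[:20])
    working = sum(cohorts[20:65])
    old = sum(cohorts[65:])
    return (young + old) / working


def simulate_baby_boom(boom=0.2, years=70, max_age=80):
    """Dependency ratio over time after births step up by `boom`."""
    cohorts = [1.0] * max_age  # index = age in years; stationary start
    ratios = [dependency_ratio(cohorts)]
    for _ in range(years):
        # Everyone ages one year, the oldest cohort exits,
        # and a larger birth cohort enters at age 0.
        cohorts = [1.0 + boom] + cohorts[:-1]
        ratios.append(dependency_ratio(cohorts))
    return ratios


ratios = simulate_baby_boom()
baseline = ratios[0]
peak_year = max(range(len(ratios)), key=lambda t: ratios[t])
first_better_year = next(t for t, r in enumerate(ratios) if r < baseline)

if __name__ == "__main__":
    print(f"baseline ratio: {baseline:.3f}")
    print(f"ratio peaks in year {peak_year}")
    print(f"ratio first falls below baseline in year {first_better_year}")
```

In this stylized setup the extra children are pure dependents until they reach working age, so the ratio gets worse for two decades before it can start to improve, and it takes longer still to get back below where it started.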

More importantly, growth is not a sustainable strategy for coping with aging. At the same time that growth augments labor, it dilutes the resource base and capital available per capita. If you believe that people are the ultimate resource, i.e. that increasing returns to human capital will create offsetting technical opportunities, that might work. I rather doubt that’s a viable strategy though; human capital is more than just warm bodies (of which there’s no shortage); it’s educated and productive bodies – which are harder to get. More likely, a growth strategy just accelerates the arrival of resource constraints. In any case, the population growth play is not robust to uncertainty about future returns to human capital – if there are bumps on the technical road, it’s disastrous.

To say that population growth is a bad strategy for China is not necessarily to say that the one child policy should stay. If its enforcement is spotty, perhaps lifting it would be a good thing. Focusing on incentives and values that internalize population tradeoffs might lead to a better long term outcome than top-down control.

Pink Noise

In a continuous time dynamic model, representing noise as a random draw at every time step can be problematic. As the time step is decreased, the high frequency power of the noise spectrum increases accordingly, potentially changing the behavior. In the limit of small time steps, the resulting white noise has infinite power, which is not physically realistic.

The solution is to use pink noise, which is essentially white noise filtered to cut off high frequencies. SD models from the bad old days typically used a pink noise generating structure driven by uniformly distributed white noise, relying on the central limit theorem to yield a normally distributed output. Ed Anderson improved that structure to incorporate a normally distributed input, which works better, especially if the cutoff frequency is close to the inverse of the time step.

Two versions of the model are attached: one for advanced versions of Vensim, which permit implementation as a :MACRO:, for efficient reuse. The other works with Vensim PLE.

PinkNoise2010.mdl PinkNoise2010.vmf PinkNoise2010.vpm

PinkNoise2010-PLE.vmf PinkNoise2010-PLE.vpm

Contributed by Ed Anderson, updated by Tom Fiddaman

Notes (also in the model files):

Description: The pink noise molecule generates a simple random series with autocorrelation. This is useful in representing time series, like rainfall from day to day, in which today’s value has some correlation with what happened yesterday. This particular formulation will also have properties such as standard deviation and mean that are insensitive both to the time step and the correlation (smoothing) time. Finally, the output as a whole and the difference in values between any two days are guaranteed to be Gaussian (normal) in distribution.

Behavior: Pink noise series will have both a historical and a random component during each period. The relative “trend-to-noise” ratio is controlled by the length of the correlation time. As the correlation time approaches zero, the pink noise output will become more independent of its historical value and more “noisy.” On the other hand, as the correlation time approaches infinity, the pink noise output will approximate a continuous time random walk or Brownian motion. Displayed above are two time series with correlation times of 1 and 8 months. While both series have approximately the same standard deviation, the 1-month correlation time series is less smooth from period to period than the 8-month series, which is characterized by “sustained” swings in a given direction. Note that this behavior will be independent of the time-step. The “pink” in pink noise refers to the power spectrum of the output. A time series in which each period’s observation is independent of the past is characterized by a flat or “white” power spectrum. Smoothing a time series attenuates the higher or “bluer” frequencies of the power spectrum, leaving the lower or “redder” frequencies relatively stronger in the output.

Caveats: This assumes the use of Euler integration with a time step of no more than 1/4 of the correlation time. Very long correlation times should also be avoided, as the multiplication in the scaled white noise will become progressively less accurate.

Technical Notes: This particular form of pink noise is superior to that of Britting presented in Richardson and Pugh (1981) because the Gaussian (Normal) distribution of the output does not depend on the Central Limit Theorem. (Dynamo did not have a Gaussian random number generator and hence R&P had to invoke the CLT to get a normal distribution.) Rather, this molecule’s normal output is a result of the observations being a sum of Gaussian draws. Hence, the series over short intervals should better approximate normality than the macro in R&P.

MEAN: This is the desired mean for the pink noise.

STD DEVIATION: This is the desired standard deviation for the pink noise.

CORRELATION TIME: This is the smooth time for the noise or, for the more technically minded, the inverse of the filter’s cut-off frequency in radians per unit time.
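For readers without Vensim, the structure described in the notes above can be sketched in Python. This is a minimal sketch, not a transcription of the model files: it assumes a first-order exponential smooth of Gaussian white noise, integrated with Euler, with the white noise scaled so the output variance is independent of the time step. The function and argument names are made up for illustration. With a = time step / correlation time, the Euler update is x ← (1 − a)·x + a·w, whose stationary variance is a·Var(w)/(2 − a), so the input needs a standard deviation of σ·√((2 − a)/a).

```python
import math
import random


def pink_noise(mean, std_dev, correlation_time, time_step, n_steps, seed=1):
    """First-order filtered Gaussian noise with the requested mean and std dev.

    Sketch only: Euler integration, normal white-noise input, and a scaling
    factor derived from the stationary variance of the discrete filter.
    """
    a = time_step / correlation_time  # fraction of the gap closed per step
    if not 0.0 < a <= 0.25:
        raise ValueError("time step should be at most 1/4 of correlation time")
    rng = random.Random(seed)
    scale = std_dev * math.sqrt((2.0 - a) / a)  # std dev of the white input
    level = mean  # start the stock at its mean
    series = []
    for _ in range(n_steps):
        series.append(level)
        white = mean + scale * rng.gauss(0.0, 1.0)
        level += time_step * (white - level) / correlation_time  # Euler step
    return series


if __name__ == "__main__":
    noise = pink_noise(mean=10.0, std_dev=2.0, correlation_time=4.0,
                       time_step=0.25, n_steps=20)
    print([round(x, 2) for x in noise])
```

Halving `time_step` while holding `correlation_time` fixed should leave the long-run mean and standard deviation of the output roughly unchanged, which is the property the notes describe.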

Painting ourselves into a green corner

At the Green California Summit & Expo this week, I saw a strange sight: a group of greentech manufacturers hanging out in the halls, griping about environmental regulations. Their point? That a surfeit of command-and-control measures makes compliance such a lengthy and costly process that it’s hard to bring innovations to market. That’s a nice self-defeating outcome!

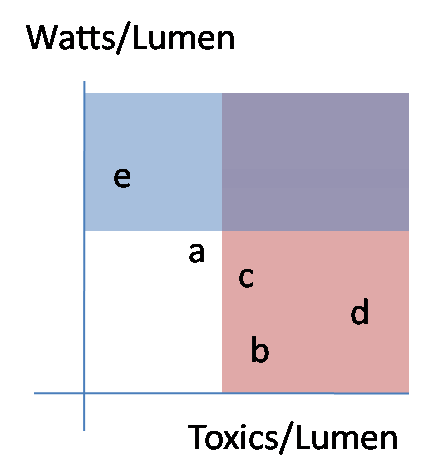

Consider this situation:

I was thinking of lighting, but it could be anything. Letters a-e represent technologies with different properties. The red area is banned as too toxic. The blue area is banned as too inefficient. That leaves only technology a. Maybe that’s OK, but what if a is made in Cuba, or emits harmful radiation, or doesn’t work in cold weather? That’s how regulations get really complicated and laden with exceptions. Also, if we revise our understanding of toxics, how should we update this to reflect the tradeoffs between toxics in the bulb and toxics from power generation, or using less toxic material per bulb vs. using fewer bulbs? Notice that the only feasible option here – a – is not even on the efficient frontier; a mix of e and b could provide the same light with slightly less power and toxics.

Proliferation of standards creates a situation with high compliance costs, both for manufacturers and the bureaucracy that has to administer them. That discourages small startups, leaving the market for large firms, which in turn creates the temptation for the incumbents to influence the regulations in self-serving ways. There are also big coverage issues: standards have to be defined clearly, which usually means that there are fringe applications that escape regulation. Refrigerators get covered by Energy Star, but undercounter icemakers and other cold energy hogs don’t. Even when the standards work, lack of a price signal means that some of their gains get eaten up by rebound effects. When technology moves on, today’s seemingly sensible standard becomes part of tomorrow’s “dumb laws” chain email.

The solution is obviously not total laissez faire; then the environmental goals just don’t get met. There probably are some things that are most efficient to ban outright (but not the bulb), but for most things it would be better to impose upstream prices on the problems – mercury, bisphenol A, carbon, or whatever – and let the market sort it out. Then providers can make tradeoffs the way they usually do – which package of options makes the cheapest product? – without a bunch of compliance risk involved in bringing their product to market.

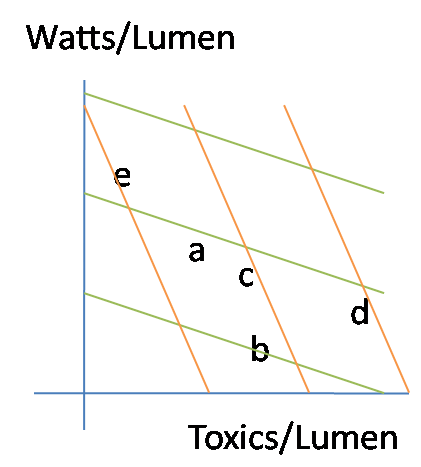

Here’s the alternative scheme:

The green and orange lines represent isocost curves for two different sets of energy and toxic prices. If the unit prices of a-e were otherwise the same, you’d choose b with the green pricing scheme (cheap toxics, expensive energy) and e in the opposite circumstance (orange). If some of the technologies are uniquely valuable in some situations, pricing also permits that tradeoff – perhaps c is not especially efficient or clean, but has important medical applications.
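The contrast between the two schemes can be sketched numerically. The coordinates, limits and prices below are hypothetical, picked only to match the qualitative layout described in the text (a is the only technology inside both limits, yet a blend of b and e beats it); they are not read off the figures.

```python
# Hypothetical (energy use, toxic content) per unit of light for each technology.
TECH = {
    "a": (5.5, 5.5),
    "b": (2.0, 8.0),  # efficient but toxic
    "c": (7.0, 7.0),
    "d": (9.0, 4.0),
    "e": (8.0, 2.0),  # clean but inefficient
}


def allowed_under_standards(max_energy=6.0, max_toxics=6.0):
    """Command-and-control: ban anything over either (assumed) limit."""
    return [name for name, (energy, toxics) in TECH.items()
            if energy <= max_energy and toxics <= max_toxics]


def cheapest(energy_price, toxics_price):
    """Pricing: choose the technology with the lowest total cost."""
    def cost(name):
        energy, toxics = TECH[name]
        return energy_price * energy + toxics_price * toxics
    return min(TECH, key=cost)


def blend(first, second, share=0.5):
    """Energy and toxics of a mix of two technologies."""
    (e1, t1), (e2, t2) = TECH[first], TECH[second]
    return (share * e1 + (1 - share) * e2, share * t1 + (1 - share) * t2)


if __name__ == "__main__":
    print("survives the standards:", allowed_under_standards())
    print("expensive energy, cheap toxics:", cheapest(3.0, 1.0))
    print("cheap energy, expensive toxics:", cheapest(1.0, 3.0))
    print("50/50 blend of b and e:", blend("b", "e"), "vs a:", TECH["a"])
```

Under the standards only one option survives regardless of what energy and toxics actually cost; under pricing the choice shifts as the relative prices change.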

With a system driven by prices and values, we could have very simple conversations about adaptive environmental control. Are NOx levels acceptable? If not, raise the price of emitting NOx until it is. End of discussion.

Two related tidbits:

Fed green buildings guru Kevin Kampschroer gave an interesting talk on the GSA’s greening efforts. He expressed hope that we could move from LEED (checklists) to LEEP (performance-based ratings).

I heard from a lighting manufacturer that the cost of making a CFL is under a buck, but running a recycling program (for mercury recapture) costs $1.50/bulb. There must be a lot of markup in the distribution channels to get them up to retail prices.

State of the global deal, Feb 2010

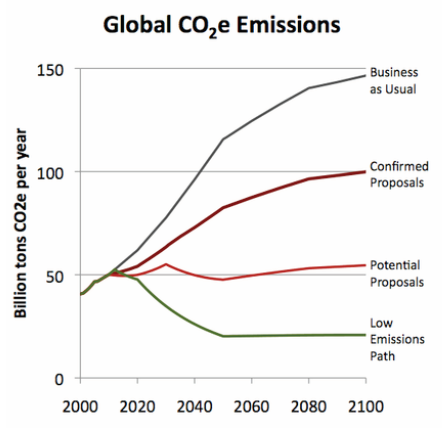

Somehow I forgot to mention our latest release:

The “Confirmed Proposals” emissions above translate into temperature rise of 3.9C (7F) in 2100. More details on the CI blog. The widget still stands where we left it in Copenhagen: