NPR has a nice article on self-regulation in the textbook industry. It turns out that textbook prices are up almost 100% from 2002, yet student spending on texts is nearly flat. (See the article for concise data.)

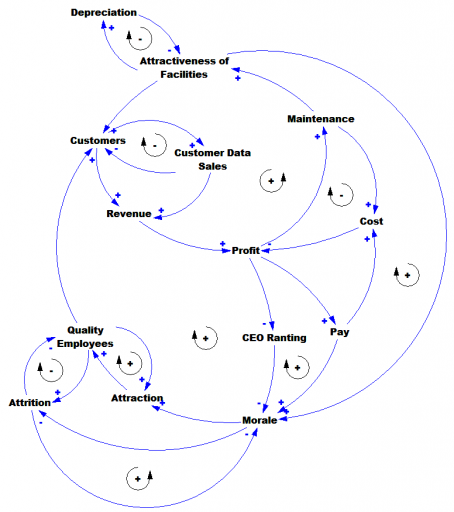

Here’s part of the structure that explains the data:

Starting with a price increase, students have a lot of options: they can manage textbooks more intensively (e.g., sharing, brown), they can simply choose to use fewer (substitution, blue), they can adopt alternatives that emerge after a delay (red), and they can extend the life of a given text by being quick to sell it back, or an agent can do that on their behalf by creating a rental fleet (green).

All of these options help students to hold spending to a desired level, but they have the unintended effect of triggering a variant of the utility death spiral. As unit sales (purchasing) fall, the unit cost of producing textbooks rises, due to the high fixed costs of developing and publishing the materials. That drives up prices, prompting further reductions in purchasing – a vicious cycle.
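To make the loop concrete, here is a minimal sketch of that cycle in Python. It is a toy, not a calibrated model: the function name, the cost and budget figures, and the two simplifying rules (publishers price at last period's average cost, students buy whatever a fixed budget allows) are all my assumptions for illustration, not numbers from the NPR article.

```python
def simulate_textbook_spiral(
    fixed_cost=3_300_000.0,   # hypothetical development cost, after a 10% increase
    marginal_cost=20.0,       # hypothetical printing/distribution cost per copy
    budget=5_000_000.0,       # hypothetical total student spending, held flat
    initial_units=100_000.0,  # unit sales before the cost increase
    steps=30,
):
    """Iterate the price -> purchasing -> unit cost -> price loop.

    Assumed rules (illustrative only):
      * price equals average cost at the previous period's unit sales
      * students hold spending at `budget`, so units = budget / price
    The loop settles only if fixed_cost < budget; otherwise it runs away.
    Returns a list of (price, units, spending) tuples, one per period.
    """
    units = initial_units
    history = []
    for _ in range(steps):
        # Fixed costs are spread over fewer copies as sales fall.
        price = marginal_cost + fixed_cost / units
        # Students respond by buying only what the flat budget covers.
        units = budget / price
        history.append((price, units, price * units))
    return history


if __name__ == "__main__":
    for price, units, spending in simulate_textbook_spiral(steps=10):
        print(f"price {price:7.2f}  units {units:10.0f}  spending {spending:12.0f}")
```

Under these assumptions, spending stays flat in every period while price ratchets up and unit sales ratchet down. With unit sales frozen at 100,000, the new fixed cost alone would put the price at 53; with the feedback active, the price keeps climbing toward `marginal_cost / (1 - fixed_cost / budget)`, about 58.8 here. In this toy version the loop amplifies the initial shock rather than running away, because the fixed cost is smaller than the budget.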

This isn’t quite the whole story – there’s more to the supply side to think about. If publishers are facing a margin squeeze from rising costs, are they offering fewer titles, for example? I leave that as an exercise.

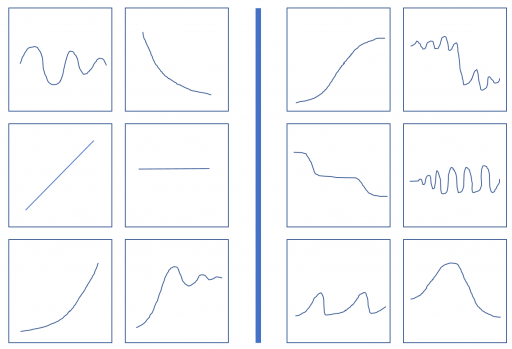

The six on the left conform to a pattern or rule, and your task is to discover it. As an aid, the six boxes on the right do not conform to the same pattern. They might conform to a different pattern, or simply reflect the negation of the rule on the left. It’s possible that more than one rule discriminates between the sets, but the one that I have in mind is not strictly visual (that’s a hint).
