The pitfalls of pattern matching don’t just apply to intuitive comparisons of the behavior of associated stocks and flows. They also apply to statistics. This means, for example, that a linear regression like

stock = a + b*flow + c*time + error

is likely to go seriously wrong. That doesn’t stop such things from sneaking into the peer-reviewed literature, though. A more common quasi-statistical error is to take two things that might be related, measure their linear trends, and declare the relationship falsified if the trends don’t match. This bogus reasoning remains a popular pastime of climate skeptics, who ask how temperature could go down during some period when emissions went up. (See this example.) This kind of naive statistical reasoning, with static mental models of dynamic phenomena, is hardly limited to climate skeptics, though.

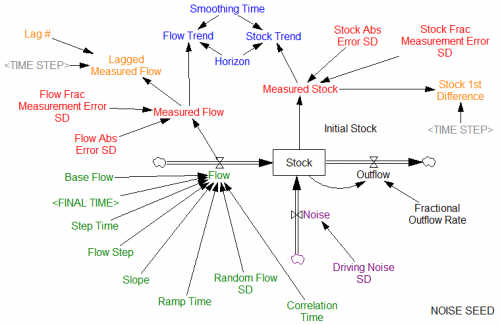

Given the dynamics, it’s actually quite easy to see how such things can occur. Here’s a more complete example of a realistic situation:

At the core, we have the same flow driving a stock. The flow is determined by a variety of test inputs, so we’re still not worrying about circular causality between the stock and flow. There is potentially feedback from the stock to an outflow, though this is not active by default. The stock is also subject to other random influences, with a standard deviation given by Driving Noise SD. We can’t necessarily observe the stock and flow directly; our observations are subject to measurement error. For purposes that will become evident momentarily, we might perform some simple manipulations of our measurements, like lagging and differencing. We can also measure trends of the stock and flow. Note that this still simplifies reality a bit, in that the flow measurement is instantaneous, rather than requiring its own integration process as physics demands. There are no complications like missing data or unequal measurement intervals.

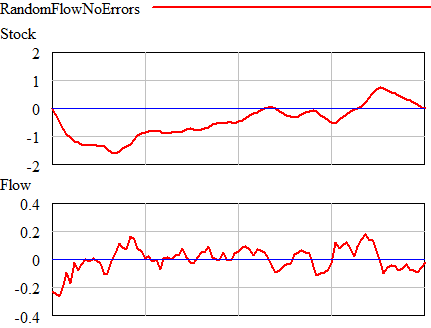

Now for an experiment. First, suppose that the flow is random (pink noise) and there are no measurement errors, driving noise, or outflows. In that case, you see this:

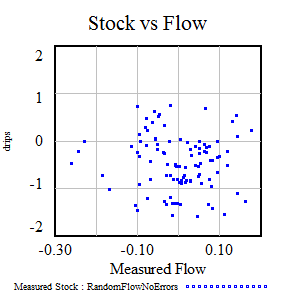

One could actually draw some superstitious conclusions about the stock and flow time series above by breaking them into apparent episodes, but that’s quite likely to mislead unless you’re thinking explicitly about the bathtub. Looking at a stock-flow scatter plot, it appears that there is no relationship:

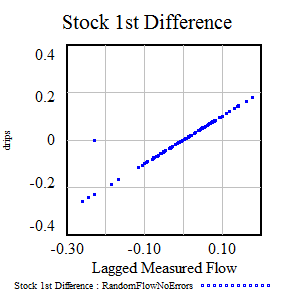

Of course, we know this is wrong because we built the model with perfect Flow->Stock causality. The usual statistical trick to reveal the relationship is to undo the integration by taking the first difference of the stock data. When you do that, plotting the change in the stock vs. the flow (lagged one period to account for the differencing), the relationship reappears:
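For readers who want to poke at this numerically, here is a minimal sketch of the noise-free experiment in Python with NumPy. It is not the original model: the `pink_noise` helper is a hypothetical AR(1) stand-in for pink noise, and the autocorrelation of 0.8, the series length, and the seed are arbitrary assumptions.

```python
import numpy as np

def pink_noise(n, rho, rng):
    """Unit-variance AR(1) noise, used here as an assumed stand-in for pink noise."""
    x = np.empty(n)
    x[0] = rng.standard_normal()
    shocks = rng.standard_normal(n) * np.sqrt(1.0 - rho**2)
    for t in range(1, n):
        x[t] = rho * x[t - 1] + shocks[t]
    return x

rng = np.random.default_rng(0)  # arbitrary seed
n = 2000
flow = pink_noise(n, rho=0.8, rng=rng)

# The stock integrates the flow (Euler step, dt = 1): stock[t+1] = stock[t] + flow[t]
stock = np.concatenate(([0.0], np.cumsum(flow)))[:n]

# Level vs. level: the scatter-plot view
corr_levels = np.corrcoef(flow, stock)[0, 1]

# Undo the integration: change in the stock vs. the flow that produced it
d_stock = np.diff(stock)   # d_stock[t] = stock[t+1] - stock[t]
lagged_flow = flow[:-1]    # flow[t], one period before the later stock reading
corr_diff = np.corrcoef(lagged_flow, d_stock)[0, 1]

print(f"corr(flow, stock)          = {corr_levels:+.3f}")
print(f"corr(lagged flow, d_stock) = {corr_diff:+.3f}")
```

With no noise in the system, the differenced stock reproduces the lagged flow, so the second correlation is essentially perfect, while the level-to-level correlation is typically weak and varies from draw to draw.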

Unfortunately, this is not a very robust procedure. Derivatives amplify noise, and it’s easy for other things to go wrong, like getting the reporting lags misaligned. Adding driving noise and measurement errors to the last experiment, we can see how the observed slope of the relationship disappears into the mist:

Lucky vs. unlucky random draws, with relationships partially to completely obscured by noise.

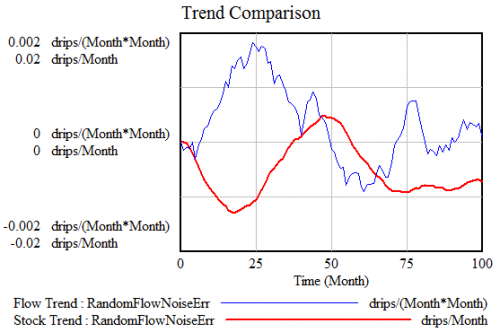
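The fading slope can be reproduced with a rough sketch along the following lines. Everything here is an assumption for illustration: the hypothetical `observed_slope` helper, the AR(1) stand-in for pink noise, unit noise standard deviations, and the use of one `measurement_sd` for both the stock and the flow.

```python
import numpy as np

def pink_noise(n, rho, rng):
    """Unit-variance AR(1) noise, an assumed stand-in for pink noise."""
    x = np.empty(n)
    x[0] = rng.standard_normal()
    shocks = rng.standard_normal(n) * np.sqrt(1.0 - rho**2)
    for t in range(1, n):
        x[t] = rho * x[t - 1] + shocks[t]
    return x

def observed_slope(driving_sd, measurement_sd, n=5000, seed=1):
    """Regress the measured change in the stock on the measured, lagged flow."""
    rng = np.random.default_rng(seed)
    flow = pink_noise(n, 0.8, rng)
    # The stock integrates the flow plus random driving noise
    net_inflow = flow + driving_sd * rng.standard_normal(n)
    stock = np.concatenate(([0.0], np.cumsum(net_inflow)))[:n]
    # Observations carry independent measurement error
    flow_obs = flow + measurement_sd * rng.standard_normal(n)
    stock_obs = stock + measurement_sd * rng.standard_normal(n)
    d_stock_obs = np.diff(stock_obs)
    x = flow_obs[:-1]
    slope, _intercept = np.polyfit(x, d_stock_obs, 1)
    r2 = np.corrcoef(x, d_stock_obs)[0, 1] ** 2
    return slope, r2

clean_slope, clean_r2 = observed_slope(driving_sd=0.0, measurement_sd=0.0)
noisy_slope, noisy_r2 = observed_slope(driving_sd=1.0, measurement_sd=1.0)

print(f"clean: slope = {clean_slope:.2f}, R^2 = {clean_r2:.2f}")
print(f"noisy: slope = {noisy_slope:.2f}, R^2 = {noisy_r2:.2f}")
```

Two effects are at work in the noisy case. Measurement error in the flow biases the fitted slope toward zero, and differencing the stock doubles the variance contributed by its measurement error, which drags down the fit.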

In any case, comparison of trends of the Stock and Flow is not informative. In effect, a trend comparison is taking the derivatives of both sides of the equation, so it doesn’t undo the integration – it just shifts it to another level and amplifies noise. Here’s a comparison of trend estimates (from a second order smooth) from the noisy experiment above:

It actually doesn’t matter what smoothing or regression algorithm you use to estimate the trends, because no amount of manipulation can make up for the fact that you’re ignoring bathtub dynamics.
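A deliberately noise-free toy case makes the point concrete. The numbers below are made up for illustration: a net inflow that declines steadily but stays positive, feeding a stock that starts at zero.

```python
import numpy as np

t = np.arange(0.0, 50.0)   # time in periods
flow = 2.0 - 0.02 * t      # hypothetical net inflow: falling, but still positive

# The stock integrates the flow (Euler step, dt = 1), starting from zero
stock = np.concatenate(([0.0], np.cumsum(flow)))[:-1]

# Linear trend (slope of a least-squares line) for each series
flow_trend = np.polyfit(t, flow, 1)[0]
stock_trend = np.polyfit(t, stock, 1)[0]

print(f"flow trend:  {flow_trend:+.3f} per period")
print(f"stock trend: {stock_trend:+.3f} per period")
```

The flow trend is negative and the stock trend is positive, even though the flow is the only thing driving the stock. The stock's trend reflects the level of the net flow, while the flow's trend reflects its rate of change, so matching or mismatching signs tell you nothing about whether the causal link exists.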

In cases where noise defeats differencing, it’s still possible to get some information out of the experiments above by getting more sophisticated (estimating a dynamic model, with Kalman filtering), but for that you have to be fully immersed in the bathtub.
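As a taste of what that involves, here is a minimal sketch of a scalar Kalman filter that tracks the stock by running the bathtub forward and correcting with each noisy observation. It only filters the state. The noise variances are assumed known, the flow is assumed to be observed without error, and the flow is plain white noise for brevity; a real application would estimate the parameters as well.

```python
import numpy as np

rng = np.random.default_rng(2)           # arbitrary seed
n = 2000
driving_sd, measurement_sd = 1.0, 2.0    # assumed known; normally these are estimated

# Simulate the bathtub: stock[t] = stock[t-1] + flow[t-1] + driving noise
flow = rng.standard_normal(n)            # assumption: flow observed without error
stock = np.zeros(n)
for t in range(1, n):
    stock[t] = stock[t - 1] + flow[t - 1] + driving_sd * rng.standard_normal()
stock_obs = stock + measurement_sd * rng.standard_normal(n)

Q, R = driving_sd**2, measurement_sd**2  # process and measurement variances
estimate = np.zeros(n)
x_hat, P = stock_obs[0], R               # start from the first observation
estimate[0] = x_hat
for t in range(1, n):
    # Predict: integrate the known flow, and let uncertainty grow
    x_hat = x_hat + flow[t - 1]
    P = P + Q
    # Update: blend the prediction with the new noisy observation
    K = P / (P + R)
    x_hat = x_hat + K * (stock_obs[t] - x_hat)
    P = (1.0 - K) * P
    estimate[t] = x_hat

rmse_raw = np.sqrt(np.mean((stock_obs - stock) ** 2))
rmse_filtered = np.sqrt(np.mean((estimate - stock) ** 2))
print(f"RMSE of raw observations:   {rmse_raw:.2f}")
print(f"RMSE of filtered estimates: {rmse_filtered:.2f}")
```

The filter does better than the raw measurements precisely because it has the stock-flow structure built in: the prediction step is the bathtub equation.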
