The scoreboard widget just hit 1000 installs and 90,000 views. Janice Molloy has a nice perspective on the use of feedback to change the world, on Leverage Points.

Author: Tom

Danish text – emissions trajectories

Surfing a bit, it looks like the furor over the leaked Danish text actually has at least four major components:

1. Lack of per capita convergence in 2050.

2. Requirement that the upper tier of developing countries set targets.

3. Institutional arrangements that determine control of funds and activity.

4. The global peak in 2020 and decline to 2050.

These are evident in coverage in Politico and COP15.dk, for example.

I’ve already tackled #1, which is an illusion based on flawed analysis. I also commented on #2 – whether you set formal targets or not, absolute emissions need to fall in every major region if atmospheric GHG concentrations and radiative imbalance are to stabilize or decline. I don’t have an opinion on #3. #4 is what I’d like to talk about here.

There seem to be two responses to #4: dissatisfaction with the very idea of peaking and declining on anything like that schedule, and dissatisfaction with the burden sharing at the peak.

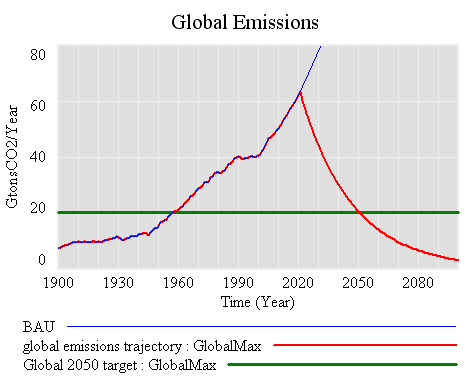

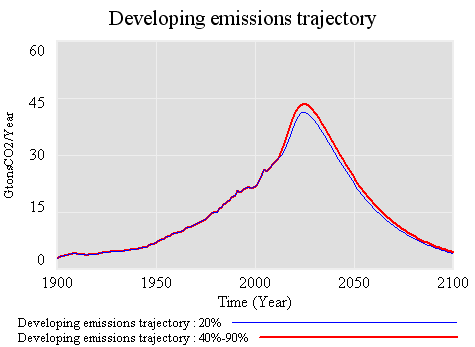

First, the global trajectory. There are a variety of possible emissions paths that satisfy the criteria in the Danish text. Here’s one (again relying on C-ROADS data and projections, close to A1FI in the future):

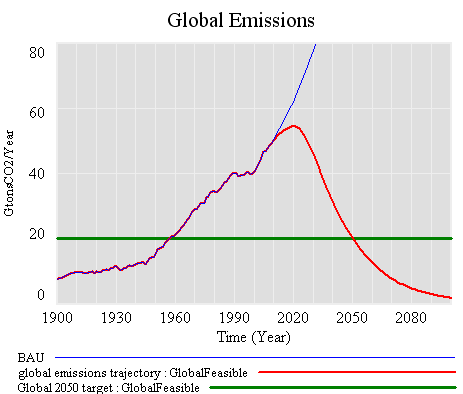

Here, emissions grow along Business-As-Usual (BAU), peak in 2020, then decline at 3.75%/year to hit the 2050 target. This is, of course, a silly trajectory, because it’s basically impossible to turn the economy on a dime like this, transitioning overnight from growth to decline. More plausible trajectories have to be smooth, like this:

One consequence of the smoothness constraint is that emissions reductions have to be faster later, to make up for the growth that occurs during a gradual transition from growing to declining emissions. Here, they approach -5%/year, vs. -3.5% in the “pointy” trajectory. A similar constraint arises from the need to maintain the area under the emissions curve if you want to achieve similar climate outcomes.
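To make the arithmetic behind the "pointy" trajectory concrete, here is a minimal sketch of the constant decline rate needed to get from a peak to the 2050 target. The 20 GtCO2eq/year target follows from the Danish text's goal of 50% of 1990 emissions (roughly 40 GtCO2eq/year). The 60 GtCO2eq/year peak level is an illustrative assumption, not a C-ROADS output, and the function name is hypothetical.

```python
def required_decline_rate(peak, target, years):
    """Constant fractional decline per year that takes `peak` to `target` in `years`."""
    return 1.0 - (target / peak) ** (1.0 / years)

# Assumed values, for illustration only.
PEAK_LEVEL = 60.0   # GtCO2eq/year, hypothetical emissions level at the peak
TARGET_2050 = 20.0  # GtCO2eq/year, 50% of roughly 40 in 1990

rate = required_decline_rate(PEAK_LEVEL, TARGET_2050, 2050 - 2020)
print(f"peak in 2020: {rate:.2%} per year")

# Same peak level, but five years later: the runway is shorter,
# so the required decline rate is higher.
late_rate = required_decline_rate(PEAK_LEVEL, TARGET_2050, 2050 - 2025)
print(f"peak in 2025: {late_rate:.2%} per year")
```

If anything this understates the penalty for delay, since under BAU a later peak would also be a higher one.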

So, anyone who argues for a later peak is in effect arguing for (a) faster reductions later, or (b) weaker ambitions for the climate outcome. I don’t see how either of these is in the best interest of developing countries (or developed, for that matter). (A) means spending at most an extra decade building carbon-intensive capital, only to abandon it at a rapid rate a decade or two later. That’s not development. (B) means more climate damages and other negative externalities, for which growth is unlikely to compensate, and to which the developing countries are probably most vulnerable.

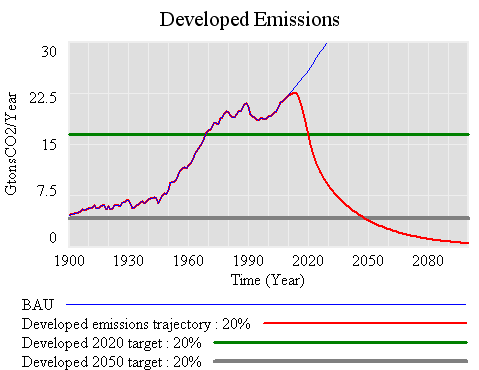

If, for the sake of argument, we take my smooth version of the Danish text target as a given, there’s still the question of how emissions between now and 2050 are partitioned. If the developed world shoots for 20% below 1990 by 2020, the trajectory might look like this:

That’s a target that most would consider to be modest, yet to hit it, emissions have to peak by 2012, and decline at up to 8% per year through 2020. It would be easier to achieve in some regions, like the EU, that are already not far from Kyoto levels, but tough for the developed world as a whole. The natural turnover of buildings, infrastructure and power plants is 2 to 4%/year, so emissions declining at 8% per year means some combination of massive retrofits, where possible, and abandonment of carbon-emitting assets. If that were happening in the developed world, in tandem with free trade, rapid growth and no targets in the developing world, it would surely mean massive job dislocations. I expect that would cause the emission control regime in the developed world to crumble.
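A rough way to see the gap between natural turnover and an 8%/year decline is to compound both over the eight years from 2012 to 2020. This sketch assumes, optimistically, that every asset retired through normal turnover is replaced by a zero-emission one, and, pessimistically, that the 8% rate holds in every year (the text above says "up to" 8%). The names are illustrative.

```python
def remaining_fraction(annual_rate, years):
    """Fraction of initial emissions left after declining at `annual_rate` for `years`."""
    return (1.0 - annual_rate) ** years

YEARS = 2020 - 2012

needed = remaining_fraction(0.08, YEARS)         # the -8%/year path
turnover_slow = remaining_fraction(0.02, YEARS)  # 2%/year natural turnover
turnover_fast = remaining_fraction(0.04, YEARS)  # 4%/year natural turnover

# Share of the initial emitting stock that must be retrofitted or abandoned
# early, beyond what natural turnover alone would retire.
gap_low = turnover_fast - needed
gap_high = turnover_slow - needed

print(f"left after 8%/yr decline: {needed:.0%}")
print(f"left after turnover alone: {turnover_fast:.0%} to {turnover_slow:.0%}")
print(f"extra retrofit or early retirement: {gap_low:.0%} to {gap_high:.0%}")
```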

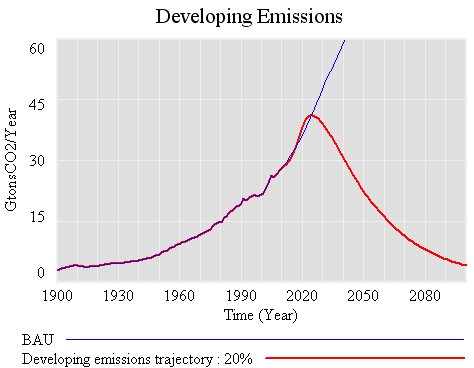

The -20% in 2020 trajectory creates surprisingly little headroom for growth in the developing world. Emissions can grow only until 2025 (vs. 2020 globally, and 2012 in the developed countries). Thereafter they have to fall at roughly the global rate.

What if the developed world manages to go even faster, achieving -40% from 1990 by 2020, and -90% by 2050? Surprisingly, that buys the developing countries almost nothing. Emissions can rise a little higher, but must peak around the same time, and decline at the same rate. Some of the rise is potentially inaccessible, because it exceeds BAU, and no one really knows how to control economic growth.

The reason for this behavior is fairly simple: as soon as developed countries make substantial cuts of any size, they become bit players in the global emissions game. Further reductions, acting on a small base, are tiny compared to the large base of developing country emissions. Thus the past is a story of high developed country cumulative emissions, but the future is really about the developing countries’ path. If developing countries want to push the emissions envelope, they have to accept that they are taking on a riskier climate future, no matter what the developed world does. In addition, deeper cuts on the part of the developed world become very expensive, because marginal costs grow with the depth of the cut. On the whole, everyone would be better off if the developing countries asked for more money rather than deeper emissions cuts in the developed world.
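The "bit player" effect shows up even in a back-of-envelope 2050 budget. The sketch below uses the rounded 1990 figures from the Danish text analysis post (about 40 GtCO2eq/year, split equally) and a global 2050 target of 50% of 1990. It ignores the path between now and 2050, so it only illustrates the endpoint; the function name is hypothetical.

```python
EMISSIONS_1990 = {"developed": 20.0, "developing": 20.0}  # GtCO2eq/year, rounded
GLOBAL_TARGET_2050 = 0.5 * sum(EMISSIONS_1990.values())   # 50% of 1990

def developing_budget(developed_cut):
    """2050 developing-country budget if developed countries cut `developed_cut` below 1990."""
    developed_2050 = EMISSIONS_1990["developed"] * (1.0 - developed_cut)
    return GLOBAL_TARGET_2050 - developed_2050

for cut in (0.80, 0.90, 1.00):
    budget = developing_budget(cut)
    print(f"developed cut {cut:.0%}: developing budget {budget:.0f} GtCO2eq/year")

# Relative gain for developing countries from moving the developed cut from 80% to 90%.
extra = developing_budget(0.90) / developing_budget(0.80) - 1.0
print(f"extra headroom from 80% -> 90%: {extra:.1%}")
```

Going from an 80% to a 90% cut, which is likely to be very costly at the margin, raises the developing-country budget by only about an eighth.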

There’s a deeper reason to think that calls for deeper cuts in the developed world, to provide headroom for greater emissions growth in developing countries, are counterproductive. I think the mental model behind such calls is a mixture of the following:

1. carbon fuels GDP, and GDP equals happiness

2. developing countries have an urgent need to build certain critical infrastructure, after which they can reduce emissions

3. slow growth fuels discontent, leading to revolution

4. growth pays for pollution reductions (the Kuznets curve)

Certainly each of these ideas contains some truth, and each has played a role in the phenomenal growth of the developed world. However, each is also fallacious in important ways. The elements in (1) are certainly correlated, but the relationship between carbon and happiness is mediated by all kinds of institutional and social factors. The key question for (2) is, what kind of infrastructure? Cheap carbon invites building exactly the kind of infrastructure that the developed world is now locked into, and struggling to dismantle. (3) might be true, but I suspect that it has more to do with inequitable distribution of wealth and power than it does with absolute wealth. Rapid growth can easily increase inequity. The empirical basis of the Kuznets curve in (4) is rather shaky. Attempts to grow out of the negative side effects of growth are essentially a pyramid scheme. Moreover, to the extent that growth is successful, preferences shift to nonmarket amenities like health and clean air, so the cost of the negatives of growth grows.

Rather than following the developed countries down a blind alley, the developing countries could take this opportunity to bypass our unsustainable development path. By implementing emissions controls now, they could start building a low-carbon-friendly infrastructure, and get locked into sustainable technologies, that won’t have to be dismantled or abandoned in a few decades. This would mean developing institutions that internalize environmental externalities and allocate property rights sensibly. As a byproduct, that would help the poorest within their countries avoid the consequences of the new wealth of their elites. Preferences would evolve toward low-carbon lifestyles, rather than shopping, driving, and conspicuous consumption. Now, if we could just figure out how to implement the same insights here in the developed world …

Danish text analysis

In response to a couple of requests for details, I’ve attached a spreadsheet containing my numbers from the last post on the Danish text: Danish text analysis v3 (updated).

Here it is in words, with a little rounding to simplify:

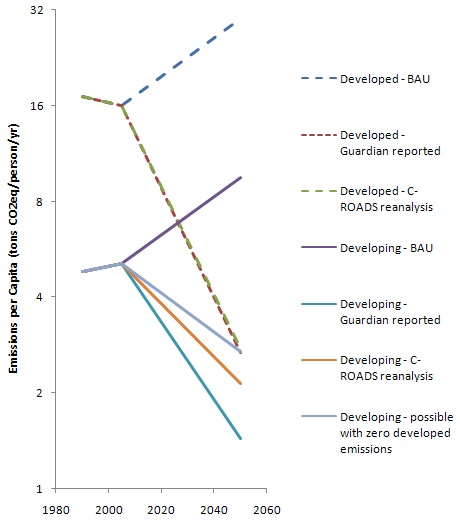

In 1990, global emissions were about 40 GtCO2eq/year, split equally between developed and developing countries. Due to population disparities, developed emissions per capita were 3.5 times bigger than developing at that point.

The Danish text sets a global target of 50% of 1990 emissions in 2050, which means that the global target is 20 GtCO2eq/year. It also sets a target of 80% (or more) below 1990 for the developed countries, which means their target is 4 GtCO2eq/year. That leaves 16 GtCO2eq/year for the developing world.

According to the “confidential analysis of the text by developing countries” cited in the Guardian, developed countries are emitting 2.7 tonsCO2eq/person/year in 2050, while developing countries emit about half as much: 1.4 tonsCO2eq/person/year. For the developed countries, that’s in line with what I calculate using C-ROADS data and projections. For the developing countries, to get 16 gigatons per year emissions at 1.4 tons per cap, you need 11 billion people emitting. That’s an addition of 6 billion people between 2005 and 2050, implying a growth rate above recent history and way above UN projections.

If you redo the analysis with a more plausible population forecast, per capita emissions convergence is nearly achieved, with developing country emissions per capita within about 25% of developed.
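As a quick check on the population implied by the 1.4 tons per capita figure, here is a small sketch using only the rounded numbers above. The 2005 population is back-calculated from the "addition of 6 billion" in the text rather than taken from a data source, so treat the growth rate as indicative only.

```python
DEVELOPING_BUDGET_2050 = 16.0  # GtCO2eq/year left for the developing world
PER_CAPITA_CLAIMED = 1.4       # tCO2eq/person/year, from the analysis cited in the Guardian
ADDED_PEOPLE = 6.0             # billions added 2005-2050, as stated in the text

# Gt divided by t/person gives billions of people.
implied_pop_2050 = DEVELOPING_BUDGET_2050 / PER_CAPITA_CLAIMED
implied_pop_2005 = implied_pop_2050 - ADDED_PEOPLE  # back-calculated assumption

# Average compound growth rate needed to get from the 2005 to the 2050 population.
growth = (implied_pop_2050 / implied_pop_2005) ** (1.0 / (2050 - 2005)) - 1.0

print(f"implied 2050 population: {implied_pop_2050:.1f} billion")
print(f"implied average growth, 2005-2050: {growth:.2%} per year")
```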

Note log scale to emphasize proportional differences.

The "Danish text" – bad numbers, missed opportunity?

The blogosphere is filled with reports, originating in the Guardian, of developing countries’ fury over the leaked “Danish text” – a strawdog draft agreement.

The UN Copenhagen climate talks are in disarray today after developing countries reacted furiously to leaked documents that show world leaders will next week be asked to sign an agreement that hands more power to rich countries and sidelines the UN’s role in all future climate change negotiations.

The angry reaction strikes me as a missed opportunity. But more importantly, I think it’s a product of bad analysis, with an inflated population projection for the developing world creating a false impression of failure to achieve emissions convergence.

Here’s what I think is the essence of the text with respect to emissions trajectories:

3. Recalling the ultimate objective of the Convention, the Parties stress the urgency of action on both mitigation and adaptation and recognize the scientific view that the increase in global average temperature above pre-industrial levels ought not to exceed 2 degrees C. In this regard, the Parties:

- Support the goal of a peak of global emissions as soon as possible, but no later than [2020], acknowledging that developed countries collectively have peaked and that the timeframe for peaking will be longer in developing countries,

- Support the goal of a reduction of global annual emissions in 2050 by at least 50 percent versus 1990 annual emissions, equivalent to at least 58 percent versus 2005 annual emissions. The Parties contributions towards the goal should take into account common but different responsibility and respective capabilities and a long term convergence of per capita emissions.

7. The developed country Parties commit to individual national economy wide targets for 2020. The targets in Attachment A would expect to yield aggregate emissions reductions by X1 percent by 2020 versus 1990 (X2 percent vs. 2005). The purchase of international offset credits will play a supplementary role to domestic action. The developed country Parties support a goal to reduce their emissions of greenhouse gases in aggregate by 80% or more by 2050 versus 1990 (X3 percent versus 2005).

9. The developing country Parties, except the least developed countries which may contribute at their own discretion, commit to nationally appropriate mitigation actions, including actions supported and enabled by technology, financing and capacity-building. The developing countries’ individual mitigation action could in aggregate yield a [Y percent] deviation in [2020] from business as usual and yielding their collective emissions peak before [20XX] and decline thereafter.

These provisions are evidently the source of much of the outrage. Again from the Guardian:

A confidential analysis of the text by developing countries also seen by the Guardian shows deep unease over details of the text. In particular, it is understood to:

• Force developing countries to agree to specific emission cuts and measures that were not part of the original UN agreement;

…

• Not allow poor countries to emit more than 1.44 tonnes of carbon per person by 2050, while allowing rich countries to emit 2.67 tonnes.

I have limited sympathy with the first point. If the world is to avoid the probability of serious climate change, emissions have to fall below natural uptake. That can’t happen if the developed world is shrinking while developing country emissions grow exponentially, so everyone has to play. You can’t cheat mother nature. If we’re serious about mitigation, the conversation has to be about how to help the developing countries peak and reduce, not whether they will.

The last point, concerning per capita emissions disparities, is actually not stated anywhere in the draft. Notice that the article 9 commitments for developing countries are expressed as variables to be filled in. To figure out what’s going on, I ran the numbers myself. I get about the same answer for the developed countries: 2.75 tonsCO2eq/person/year. When multiplied by 1.5 billion people, that’s about 4.1 gigatons per year, leaving a budget of about 15.9 for the developing world (half of 1990 emissions, or about 20 GtCO2eq, less 4.1). Dividing that by 1.44 yields an expected population in the developing world of 11 billion. That’s a bonkers population forecast, 2 billion above the UN high variant projection. If it came true, it would likely be a disaster in itself. More importantly, the per capita emissions ratios would be expressing a strange notion of (in)equity: the nearly 6 billion people added from 2005-2050 in the developing world would be emitting more than twice as much as all the people in the developed world.

If you rerun the numbers with a more sensible population forecast, with about 7.4 billion people in the developing world, the numbers are 2.75 and 2.15 tonsCO2eq/person/year in the developed and developing countries, respectively. (UN mid variant population projection is 7.9 billion, but I’m sticking with C-ROADS data for convenience, which has slightly different regional definitions). In other words, convergence is at hand: the world has gone from 3.5:1 emissions per cap in 1990 to 1.28:1 – a rather stunning achievement, not something to get mad about. In addition, it’s not physically possible to do much better than that for the developing world, because the developed world represents a small slice of the global population in 2050. For example, even if the developed world could reach zero emissions in 2050, the remaining emissions budget would only permit emissions of 2.7 tonsCO2eq/person/year in the developing countries.
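For anyone who wants to check the math without opening a spreadsheet, the steps above can be sketched in a few lines. All inputs are the figures quoted in this post; the variable names are mine, and small differences from the text come from rounding (for example, 4.125 rather than "about 4.1").

```python
GLOBAL_TARGET_2050 = 20.0    # GtCO2eq/year, half of 1990 emissions
DEVELOPED_PER_CAPITA = 2.75  # tCO2eq/person/year
DEVELOPED_POP = 1.5          # billions
DEVELOPING_POP = 7.4         # billions, the C-ROADS-based figure used in the text
GUARDIAN_PER_CAPITA = 1.44   # tCO2eq/person/year, from the confidential analysis

developed_total = DEVELOPED_PER_CAPITA * DEVELOPED_POP   # Gt/year
developing_budget = GLOBAL_TARGET_2050 - developed_total

# Population you would need for the budget to work out to 1.44 t per person.
implied_pop = developing_budget / GUARDIAN_PER_CAPITA

# Per capita emissions and convergence ratio with the more sensible population.
developing_per_capita = developing_budget / DEVELOPING_POP
ratio = DEVELOPED_PER_CAPITA / developing_per_capita

# Upper bound: the developed world at zero, the whole budget to developing countries.
ceiling = GLOBAL_TARGET_2050 / DEVELOPING_POP

print(f"developing budget: {developing_budget:.1f} Gt/year")
print(f"population implied by 1.44 t/person: {implied_pop:.1f} billion")
print(f"developing per capita at 7.4 billion: {developing_per_capita:.2f} t/person")
print(f"developed:developing ratio: {ratio:.2f}:1")
print(f"ceiling with zero developed emissions: {ceiling:.1f} t/person")
```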

Even if the high population projection came true, I still think it would be a mistake for developing countries to get mad, because there’s article 20:

20. The Parties share the view that the strengthened financial architecture should be able to handle gradually scaled up international public support. International public finance support to developing countries [should/shall] reach the order of [X] billion USD in 2020 on the basis of appropriate increases in mitigation and adaptation efforts by developing countries.

Rather than asking for higher emissions, developing countries could ask for a bigger [X] to compensate for smaller per capita emissions. Then they’d have help moving towards a low-carbon economy, with all the cobenefits that entails. They wouldn’t have to follow the developed world down a fossil-fired, energy intensive path that’s ultimately a dead end. Maybe the real anger arises from the difficulty of getting meaningful financial terms, but that’s the conversation we should be having if we want a shot at 2C or below.

Check my math. If you think I’m right, spread the word. It would be a shame if the possibility of a climate agreement were derailed by a flawed analysis of a draft document. If you think I’m wrong, please comment, and I’ll take another look.

Update: I’ve published the numbers behind this in my next post.

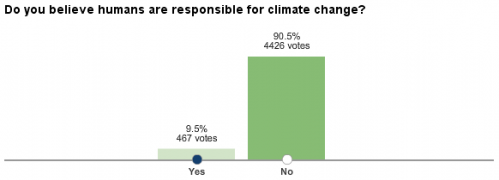

WSJ: Do you believe humans are responsible for climate change?

Snow & Eagles

Biofuel Indirection

A new paper in Science on biofuel indirect effects indicates significant emissions, and has an interesting perspective on how to treat them:

The CI of fuel was also calculated across three time periods [] so as to compare with displaced fossil energy in a LCFS and to identify the GHG allowances that would be required for biofuels in a cap-and-trade program. Previous CI estimates for California gasoline [] suggest that values less than ~96 g CO2eq MJ–1 indicate that blending cellulosic biofuels will help lower the carbon intensity of California fuel and therefore contribute to achieving the LCFS. Entries that are higher than 96 g CO2eq MJ–1 would raise the average California fuel carbon intensity and thus be at odds with the LCFS. Therefore, the CI values for case 1 are only favorable for biofuels if the integration period extends into the second half of the century. For case 2, the CI values turn favorable for biofuels over an integration period somewhere between 2030 and 2050. In both cases, the CO2 flux has approached zero by the end of the century when little or no further land conversion is occurring and emissions from decomposition are approximately balancing carbon added to the soil from unharvested components of the vegetation (roots). Although the carbon accounting ends up as a nearly net neutral effect, N2O emissions continue. Annual estimates start high, are variable from year to year because they depend on climate, and generally decline over time.

| Variable (g CO2eq MJ–1) | Case 1, 2000–2030 | Case 1, 2000–2050 | Case 1, 2000–2100 | Case 2, 2000–2030 | Case 2, 2000–2050 | Case 2, 2000–2100 |
| --- | --- | --- | --- | --- | --- | --- |
| Direct land C | 11 | 27 | 0 | –52 | –24 | –7 |
| Indirect land C | 190 | 57 | 7 | 181 | 31 | 1 |
| Fertilizer N2O | 29 | 28 | 20 | 30 | 26 | 19 |
| Total | 229 | 112 | 26 | 158 | 32 | 13 |

One of the perplexing issues for policy analysts has been predicting the dynamics of the CI over different integration periods []. If one integrates over a long enough period, biofuels show a substantial greenhouse gas advantage, but over a short period they have a higher CI than fossil fuel []. Drawing on previous analyses [], we argue that a solution need not be complex and can avoid valuing climate damages by using the immediate (annual) emissions (direct and indirect) for the CI calculation. In other words, CI estimates should not integrate over multiple years but rather simply consider the fuel offset for the policy time period (normally a single year). This becomes evident in case 1. Despite the promise of eventual long-term economic benefits, a substantial penalty—in fact, possibly worse than with gasoline—in the first few decades may render the near-term cost of the carbon debt difficult to overcome in this case.

You can compare the carbon intensities in the table to the indirect emissions considered in California standards, at roughly 30 to 46 gCO2eq/MJ.
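The comparison against gasoline in the excerpt is easy to reproduce from the "Total" row of the quoted table. A minimal sketch, taking the ~96 g CO2eq/MJ gasoline figure from the excerpt as the threshold (the dictionary layout is just for illustration):

```python
GASOLINE_CI = 96  # g CO2eq/MJ, approximate California gasoline value from the excerpt

# "Total" row of the quoted table, g CO2eq/MJ by integration period.
TOTAL_CI = {
    "case 1": {"2000-2030": 229, "2000-2050": 112, "2000-2100": 26},
    "case 2": {"2000-2030": 158, "2000-2050": 32, "2000-2100": 13},
}

# Integration periods over which biofuels come out below gasoline.
favorable = {
    case: [period for period, ci in periods.items() if ci < GASOLINE_CI]
    for case, periods in TOTAL_CI.items()
}

for case, periods in favorable.items():
    print(case, "favorable over:", ", ".join(periods))
```

This matches the excerpt: case 1 only looks favorable over the full century, while case 2 turns favorable somewhere between the 2030 and 2050 horizons.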


Reports

Indirect Emissions from Biofuels: How Important?

Jerry M. Melillo,1,* John M. Reilly,2 David W. Kicklighter,1 Angelo C. Gurgel,2,3 Timothy W. Cronin,1,2 Sergey Paltsev,2 Benjamin S. Felzer,1,4 Xiaodong Wang,2,5 Andrei P. Sokolov,2 C. Adam Schlosser2

A global biofuels program will lead to intense pressures on land supply and can increase greenhouse gas emissions from land-use changes. Using linked economic and terrestrial biogeochemistry models, we examined direct and indirect effects of possible land-use changes from an expanded global cellulosic bioenergy program on greenhouse gas emissions over the 21st century. Our model predicts that indirect land use will be responsible for substantially more carbon loss (up to twice as much) than direct land use; however, because of predicted increases in fertilizer use, nitrous oxide emissions will be more important than carbon losses themselves in terms of warming potential. A global greenhouse gas emissions policy that protects forests and encourages best practices for nitrogen fertilizer use can dramatically reduce emissions associated with biofuels production.

1 The Ecosystems Center, Marine Biological Laboratory (MBL), 7 MBL Street, Woods Hole, MA 02543, USA. * To whom correspondence should be addressed. E-mail: jmelillo@mbl.edu

Expanded use of bioenergy causes land-use changes and increases in terrestrial carbon emissions (1, 2). The recognition of this has led to efforts to determine the credit toward meeting low carbon fuel standards (LCFS) for different forms of bioenergy with an accounting of direct land-use emissions as well as emissions from land use indirectly related to bioenergy production (3, 4). Indirect emissions occur when biofuels production on agricultural land displaces agricultural production and causes additional land-use change that leads to an increase in net greenhouse gas (GHG) emissions (2, 4). The control of GHGs through a cap-and-trade or tax policy, if extended to include emissions (or credits for uptake) from land-use change combined with monitoring of carbon stored in vegetation and soils and enforcement of such policies, would eliminate the need for such life-cycle accounting (5, 6). There are a variety of concerns (5) about the practicality of including land-use change emissions in a system designed to reduce emissions from fossil fuels, and that may explain why there are no concrete proposals in major countries to do so. In this situation, fossil energy control programs (LCFS or carbon taxes) must determine how to treat the direct and indirect GHG emissions associated with the carbon intensity of biofuels. The methods to estimate indirect emissions remain controversial. Quantitative analyses to date have ignored these emissions (1), considered those associated with crop displacement from a limited area (2), confounded these emissions with direct or general land-use emissions (6–8), or developed estimates in a static framework of today’s economy (3).

Missing in these analyses is how to address the full dynamic accounting of biofuel carbon intensity (CI), which is defined for energy as the GHG emissions per megajoule of energy produced (9), that is, the simultaneous consideration of the potential of net carbon uptake through enhanced management of poor or degraded lands, nitrous oxide (N2O) emissions that would accompany increased use of fertilizer, environmental effects on terrestrial carbon storage [such as climate change, enhanced carbon dioxide (CO2) concentrations, and ozone pollution], and consideration of the economics of land conversion. The estimation of emissions related to global land-use change, both those on land devoted to biofuel crops (direct emissions) and those indirect changes driven by increased demand for land for biofuel crops (indirect emissions), requires an approach to attribute effects to separate land uses. We applied an existing global modeling system that integrates land-use change as driven by multiple demands for land and that includes dynamic greenhouse gas accounting (10, 11). Our modeling system, which consists of a computable general equilibrium (CGE) model of the world economy (10, 12) combined with a process-based terrestrial biogeochemistry model (13, 14), was used to generate global land-use scenarios and explore some of the environmental consequences of an expanded global cellulosic biofuels program over the 21st century. The biofuels scenarios we focus on are linked to a global climate policy to control GHG emissions from industrial and fossil fuel sources that would, absent feedbacks from land-use change, stabilize the atmosphere’s CO2 concentration at 550 parts per million by volume (ppmv) (15). The climate policy makes the use of fossil fuels more expensive, speeds up the introduction of biofuels, and ultimately increases the size of the biofuel industry, with additional effects on land use, land prices, and food and forestry production and prices (16).

We considered two cases in order to explore future land-use scenarios: Case 1 allows the conversion of natural areas to meet increased demand for land, as long as the conversion is profitable; case 2 is driven by more intense use of existing managed land. To identify the total effects of biofuels, each of the above cases is compared with a scenario in which expanded biofuel use does not occur (16). In the scenarios with increased biofuels production, the direct effects (such as changes in carbon storage and N2O emissions) are estimated only in areas devoted to biofuels. Indirect effects are defined as the differences between the total effects and the direct effects.

At the beginning of the 21st century, ~31.5% of the total land area (133 million km2) was in agriculture: 12.1% (16.1 million km2) in crops and 19.4% (25.8 million km2) in pasture (17). In both cases of increased biofuels use, land devoted to biofuels becomes greater than all area currently devoted to crops by the end of the 21st century, but in case 2 less forest land is converted (Fig. 1). Changes in net land fluxes are also associated with how land is allocated for biofuels production (Fig. 2). In case 1, there is a larger loss of carbon than in case 2, especially at mid-century. Indirect land use is responsible for substantially greater carbon losses than direct land use in both cases during the first half of the century. In both cases, there is carbon accumulation in the latter part of the century. The estimates include CO2 from burning and decay of vegetation and slower release of carbon as CO2 from disturbed soils. The estimates also take into account reduced carbon sequestration capacity of the cleared areas, including that which would have been stimulated by increased ambient CO2 levels.

Smaller losses in the early years in case 2 are due to less deforestation and more use of pasture, shrubland, and savanna, which have lower carbon stocks than forests and, once under more intensive management, accumulate soil carbon. Much of the soil carbon accumulation is projected to occur in sub-Saharan Africa, an attractive area for growing biofuels in our economic analyses because the land is relatively inexpensive (10) and simple management interventions such as fertilizer additions can dramatically increase crop productivity (18).

Estimates of land devoted to biofuels in our two scenarios (15 to 16%) are well below the estimate of 50% in a recent analysis (...).

We also simulated the emissions of N2O from additional fertilizer that would be required to grow biofuel crops. Over the century, the N2O emissions become larger in CO2 equivalent (CO2eq) than carbon emissions from land use (Fig. 3). The net GHG effect of biofuels also changes over time; for case 1, the net GHG balance is –90 Pg CO2eq through 2050 (a negative sign indicates a source; a positive sign indicates a sink), whereas it is +579 through 2100. For case 2, the net GHG balance is +57 Pg CO2eq through 2050 and +679 through 2100. We estimate that by the year 2100, biofuels production accounts for about 60% of the total annual N2O emissions from fertilizer application in both cases, where the total for case 1 is 18.6 Tg N yr–1 and for case 2 is 16.1 Tg N yr–1. These total annual land-use N2O emissions are about 2.5 to 3.5 times higher than comparable estimates from an earlier study (8). Our larger estimates result from differences in the assumed proportion of nitrogen fertilizer lost as N2O (21) as well as differences in the amount of land devoted to food and biofuel production. Best practices for the use of nitrogen fertilizer, such as synchronizing fertilizer application with plant demand (22), can reduce N2O emissions associated with biofuels production.

The CI of fuel was also calculated across three time periods (Table 1) so as to compare with displaced fossil energy in a LCFS and to identify the GHG allowances that would be required for biofuels in a cap-and-trade program. Previous CI estimates for California gasoline (3) suggest that values less than ~96 g CO2eq MJ–1 indicate that blending cellulosic biofuels will help lower the carbon intensity of California fuel and therefore contribute to achieving the LCFS. Entries that are higher than 96 g CO2eq MJ–1 would raise the average California fuel carbon intensity and thus be at odds with the LCFS. Therefore, the CI values for case 1 are only favorable for biofuels if the integration period extends into the second half of the century. For case 2, the CI values turn favorable for biofuels over an integration period somewhere between 2030 and 2050. In both cases, the CO2 flux has approached zero by the end of the century when little or no further land conversion is occurring and emissions from decomposition are approximately balancing carbon added to the soil from unharvested components of the vegetation (roots). Although the carbon accounting ends up as a nearly net neutral effect, N2O emissions continue. Annual estimates start high, are variable from year to year because they depend on climate, and generally decline over time.

Snowy path

Tracking climate initiatives

The launch of Climate Interactive’s scoreboard widget has been a hit – 10,500 views and 259 installs on the first day. Be sure to check out the video.

It’s a lot of work to get your arms around the diverse data on country targets that lies beneath the widget. Sometimes commitments are hard to translate into hard numbers because they’re just vague, omit key data like reference years, or are expressed in terms (like a carbon price) that can’t be translated into quantities with certainty. CI’s data is here.

There are some other noteworthy efforts:

- The Climate Analytics/Ecofys/PIK climateactiontracker

- Pew Climate tracks international, US federal and state and local initiatives

- Environment California has just launched an interactive map detailing state initiatives

- Terry Tamminen has a state climate policy tracker

- Last year, I took a look at state climate commitments and regional climate initiatives, with an eye for their use of models (see parts I, II, III)

- The Carbon Disclosure Project reports on thousands of companies

- DB reports on countries from an investor’s perspective

Update: one more from WRI

Update II: another from the UN

Fit to data, good or evil?

The following is another extended excerpt from Jim Thompson and Jim Hines’ work on financial guarantee programs. The motivation was a client request for comparison of modeling results to data. The report pushes back a little, explaining some important limitations of model-data comparisons (though it ultimately also fulfills the request). I have a slightly different perspective, which I’ll try to indicate with some comments, but on the whole I find this to be an insightful and provocative essay.

First and Foremost, we do not want to give credence to the erroneous belief that good models match historical time series and bad models don’t. Second, we do not want to over-emphasize the importance of modeling to the process which we have undertaken, nor to imply that modeling is an end-product.

In this report we indicate why a good match between simulated and historical time series is not always important or interesting and how it can be misleading. Note we are talking about comparing model output and historical time series. We do not address the separate issue of the use of data in creating a computer model. In fact, we made heavy use of data in constructing our model and interpreting the output — including first hand experience, interviews, written descriptions, and time series.

This is a key point. Models that don’t report fit to data are often accused of not using any. In fact, fit to numerical data is only one of a number of tests of model quality that can be performed. Alone, it’s rather weak. In a consulting engagement, I once ran across a marketing science model that yielded a spectacular fit of sales volume against data, given advertising, price, holidays, and other inputs – R^2 of .95 or so. It turns out that the model was a linear regression, with a “seasonality” parameter for every week. Because there were only 3 years of data, those 52 parameters were largely responsible for the good fit (R^2 fell to < .7 if they were omitted). The underlying model was a linear regression that failed all kinds of reality checks.
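To see how much fit a week-by-week seasonality term can buy on its own, here is a small sketch, not the client's model, that fits 52 weekly parameters to three years of pure noise. With only week-of-year dummies, the least-squares estimate for each week is just that week's mean across years, so no regression library is needed. The noise parameters and seed are arbitrary assumptions.

```python
import random

random.seed(0)
WEEKS, YEARS = 52, 3

# Pure noise "sales": there is nothing real to explain.
sales = [[random.gauss(100.0, 10.0) for _ in range(WEEKS)] for _ in range(YEARS)]

# With only week-of-year dummies, the least-squares estimate of each
# seasonal parameter is that week's mean across the years.
week_means = [sum(sales[y][w] for y in range(YEARS)) / YEARS for w in range(WEEKS)]

all_values = [v for year in sales for v in year]
grand_mean = sum(all_values) / len(all_values)

ss_total = sum((v - grand_mean) ** 2 for v in all_values)
ss_resid = sum((sales[y][w] - week_means[w]) ** 2
               for y in range(YEARS) for w in range(WEEKS))
r_squared = 1.0 - ss_resid / ss_total

print(f"R^2 from 52 seasonal parameters on pure noise: {r_squared:.2f}")
```

With 156 observations and 52 free parameters, the expected R^2 on noise is roughly 51/155, or about a third, which is the same order as the drop I saw when the seasonality terms were removed.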
