Roger Pielke Jr. poses a carbon price paradox:

The carbon price paradox is that any politically conceivable price on carbon can do little more than have a marginal effect on the modern energy economy. A price that would be high enough to induce transformational change is just not in the cards. Thus, carbon pricing alone cannot lead to a transformation of the energy economy.

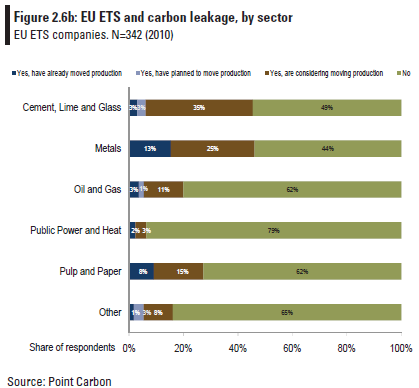

Advocates for a response to climate change based on increasing the costs of carbon-based energy skate around the fact that people react very negatively to higher prices by promising that action won’t really cost that much. … If action on climate change is indeed “not costly” then it would logically follow the only reasons for anyone to question a strategy based on increasing the costs of energy are complete ignorance and/or a crass willingness to destroy the planet for private gain. … There is another view. Specifically that the current ranges of actions at the forefront of the climate debate focused on putting a price on carbon in order to motivate action are misguided and cannot succeed. This argument goes as follows: In order for action to occur costs must be significant enough to change incentives and thus behavior. Without the sugarcoating, pricing carbon (whether via cap-and-trade or a direct tax) is designed to be costly. In this basic principle lies the seed of failure. Policy makers will do (and have done) everything they can to avoid imposing higher costs of energy on their constituents via dodgy offsets, overly generous allowances, safety valves, hot air, and whatever other gimmick they can come up with.

His prescription (and that of the Breakthrough Institute) is a low carbon tax, with the revenue reinvested in R&D:

We believe that soon-to-be-president Obama’s proposal to spend $150 billion over the next 10 years on developing carbon-free energy technologies and infrastructure is the right first step. … a $5 charge on each ton of carbon dioxide produced in the use of fossil fuel energy would raise $30 billion a year. This is more than enough to finance the Obama plan twice over.

… We would like to create the conditions for a virtuous cycle, whereby a small, politically acceptable charge for the use of carbon emitting energy, is used to invest immediately in the development and subsequent deployment of technologies that will accelerate the decarbonization of the U.S. economy.

…

Stop talking, start solving

As the nation begins to rely less and less on fossil fuels, the political atmosphere will be more favorable to gradually raising the charge on carbon, as it will have less of an impact on businesses and consumers, this in turn will ensure that there is a steady, perhaps even growing source of funds to support a process of continuous technological innovation.

This approach reminds me of an old joke:

Lenin, Stalin, Khrushchev and Brezhnev are travelling together on a train. Unexpectedly the train stops. Lenin suggests: “Perhaps, we should call a subbotnik, so that workers and peasants fix the problem.” Khrushchev suggests rehabilitating the engineers, and leaves for a while, but nothing happens. Stalin, fed up, steps out to intervene. Rifle shots are heard, but when he returns there is still no motion. Brezhnev reaches over, pulls the curtain, and says, “Comrades, let’s pretend we’re moving.”

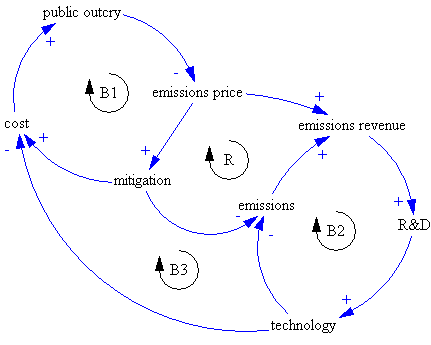

I translate the structure of Pielke’s argument like this:

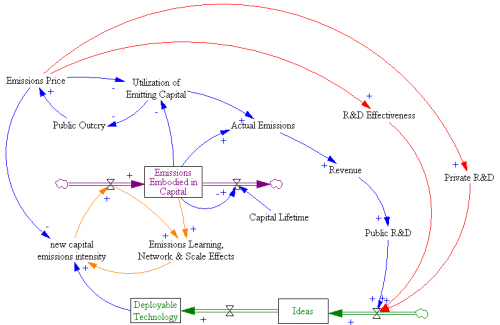

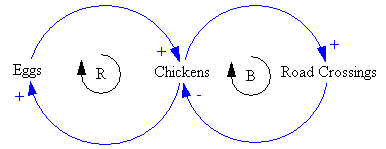

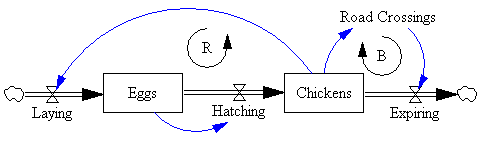

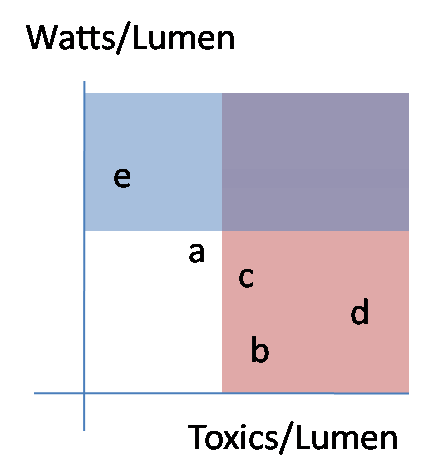

Implementation of a high emissions price now would be undone politically (B1). A low emissions price triggers a virtuous cycle (R), as revenue reinvested in technology lowers the cost of future mitigation, minimizing public outcry and enabling the emissions price to go up. Note that this structure implies two other balancing loops (B2 & B3) that serve to weaken the R&D effect, because revenues fall as emissions fall.
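The revenue side of B2 and B3 is simple arithmetic: R&D funding equals the emissions price times emissions, so it shrinks as mitigation succeeds unless the price rises to compensate. Here is a minimal Python sketch of that. It assumes the roughly 6 billion tons of CO2 per year implied by the figures quoted above ($30 billion a year at $5 per ton). The function names and the 50% reduction scenario are hypothetical illustrations, not part of Pielke's proposal.

```python
# Baseline emissions implied by the quoted figures: $30 billion / ($5 per ton).
# This is an inferred value, not a measured one.
BASELINE_EMISSIONS_TONS = 30e9 / 5.0


def revenue(price_per_ton, emissions_tons):
    """Annual revenue from an emissions price, in dollars per year."""
    return price_per_ton * emissions_tons


def price_to_hold_revenue(target_revenue, emissions_tons):
    """Price needed to keep revenue at target_revenue for a given emissions level."""
    return target_revenue / emissions_tons


base = revenue(5.0, BASELINE_EMISSIONS_TONS)
# Hypothetical scenario: emissions fall by half while the price stays at $5.
halved = revenue(5.0, 0.5 * BASELINE_EMISSIONS_TONS)
needed = price_to_hold_revenue(base, 0.5 * BASELINE_EMISSIONS_TONS)

print(f"revenue at baseline:        ${base / 1e9:.0f} billion/yr")
print(f"revenue at half emissions:  ${halved / 1e9:.0f} billion/yr")
print(f"price to hold revenue flat: ${needed:.0f}/ton")
```

In this sketch, halving emissions at a fixed price halves the R&D budget, and holding the budget flat requires doubling the price, which is exactly the increase the strategy was designed to defer.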

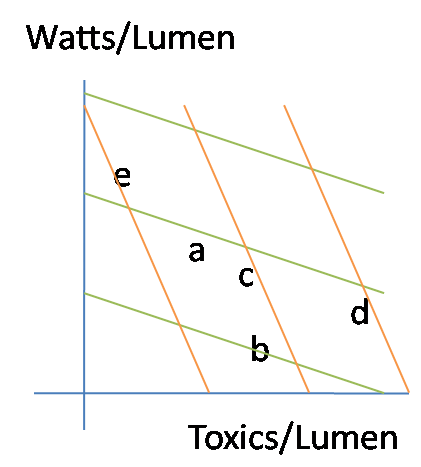

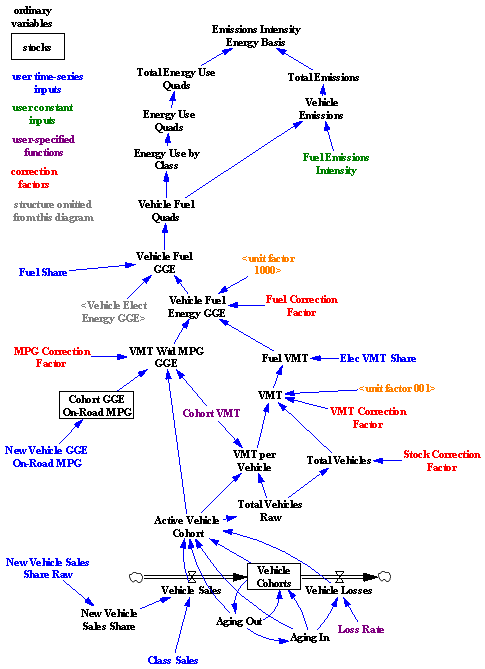

If you elaborate on the diagram a bit, you can see why the technology-led strategy is unlikely to work:

First, there’s a huge delay between R&D investment and emergence of deployable technology (green stock-flow chain). R&D funded now by an emissions price could take decades to emerge. Second, there’s another huge delay from the slow turnover of the existing capital stock (purple): even if we had cars that ran on water tomorrow, it would take 15 years or more to turn over the fleet. Buildings and infrastructure last much longer. Together, those delays greatly weaken the near-term effect of R&D on emissions, and therefore keep the virtuous cycle (greater opportunities leading to less public outcry) from getting started. As long as emissions prices remain low, commitments to high-emissions capital keep accumulating, increasing public resistance to a later change in direction.
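To get a feel for how the two delays compound, here is a toy Python sketch, not the model behind the diagrams. It assumes a third-order R&D pipeline with a 20-year total delay (an arbitrary stand-in for "decades") and a 15-year capital lifetime (the fleet turnover figure above). It also assumes R&D funding switches on fully at year 0 and that new capital is clean only to the extent that technology is deployable. All names and parameter values are hypothetical.

```python
def simulate(rd_delay_years=20.0, capital_life_years=15.0,
             horizon_years=50, dt=0.25):
    """Euler-integrate a toy R&D pipeline feeding capital turnover.

    Parameters are illustrative assumptions, not estimates.
    Returns one (year, deployable_tech, clean_capital_share) tuple per year.
    """
    stages = [0.0, 0.0, 0.0]            # three pipeline stocks (third-order delay)
    stage_time = rd_delay_years / len(stages)
    clean_share = 0.0                   # low-emissions fraction of the capital stock
    rd_signal = 1.0                     # step input: funding switches on at year 0
    steps_per_year = round(1.0 / dt)
    rows = []
    for step in range(int(horizon_years / dt) + 1):
        tech = stages[-1]               # share of the eventual technology now deployable
        if step % steps_per_year == 0:
            rows.append((step // steps_per_year, tech, clean_share))
        # Each stage adjusts toward the stage upstream of it.
        inflow = rd_signal
        updated = []
        for level in stages:
            updated.append(level + dt * (inflow - level) / stage_time)
            inflow = level
        stages = updated
        # Old capital retires at 1/lifetime; replacements are clean only
        # to the extent that technology is deployable.
        clean_share += dt * (tech - clean_share) / capital_life_years
    return rows


for year, tech, clean in simulate():
    if year % 10 == 0:
        print(f"year {year:2d}: deployable tech {tech:.2f}, clean capital {clean:.2f}")
```

Under these assumptions the clean share of capital always trails the deployable technology, which itself trails the funding decision, so the near-term effect on emissions stays small even though the long-run effect is large. Changing `rd_delay_years` or `capital_life_years` shows how sensitive the timing is to either delay.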