I’ve always thought Burt Rutan was pretty cool, so I was rather disappointed when he signed on to a shady climate op-ed in the WSJ (along with Scott Armstrong). I was curious what Rutan’s mental model was, so I googled and found his arguments summarized in an extensive slide deck, available here.

It would probably take me 98 posts to detail the problems with these 98 slides, so I’ll just summarize a few that are particularly noteworthy from the perspective of learning about complex systems.

Data Quality

Rutan claims to be motivated by data fraud:

In my background of 46 years in aerospace flight testing and design I have seen many examples of data presentation fraud. That is what prompted my interest in seeing how the scientists have processed the climate data, presented it and promoted their theories to policy makers and the media. (here)

This is ironic, because he credulously relies on much fraudulent data. For example, slide 18 attempts to show that CO2 concentrations were actually much higher in the 19th century. But that’s bogus, because many of those measurements were from urban areas or otherwise subject to large measurement errors and bias. You can reject many of the data points on first principles, because they imply physically impossible carbon fluxes (500 billion tons in one year).
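That first-principles check is easy to reproduce as back-of-envelope arithmetic. Here is a rough sketch, assuming the commonly cited conversion of about 2.13 billion tons of carbon per ppm of atmospheric CO2 and the 44/12 mass ratio of CO2 to carbon. The function names are mine, and I'm reading the 500 billion tons above as tons of CO2, which is also an assumption.

```python
# Back-of-envelope conversion between atmospheric CO2 concentration changes
# and the mass flux they imply. Constants are approximate.
GTC_PER_PPM = 2.13           # ~billion tons of carbon per ppm CO2 (assumed factor)
CO2_PER_C = 44.0 / 12.0      # mass ratio of CO2 to carbon


def ppm_to_gt_co2(delta_ppm):
    """Mass of CO2 (billion tons) implied by a concentration change in ppm."""
    return delta_ppm * GTC_PER_PPM * CO2_PER_C


def gt_co2_to_ppm(gt_co2):
    """Concentration change (ppm) implied by a CO2 mass flux in billion tons."""
    return gt_co2 / (GTC_PER_PPM * CO2_PER_C)


if __name__ == "__main__":
    # A 500 billion ton flux in one year corresponds to a jump of roughly this many ppm.
    print(round(gt_co2_to_ppm(500.0), 1))
```

The point is only the order of magnitude: a year-to-year swing of tens of ppm in the global atmosphere requires a source or sink in the hundreds of billions of tons, which is a strong hint that the measurement, not the atmosphere, is what moved.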

Slides 32-34 also present some rather grossly distorted comparisons of data and projections, complete with attributions of temperature cycles that appear to bear no relationship to the data (Slide 33, right figure, red line).

Slides 50+ discuss the urban heat island effect and the surfacestations.org effort. Somehow they neglect to mention that the outcome of all of that was a cool bias in the data, not a warm bias.

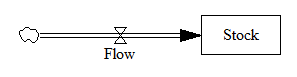

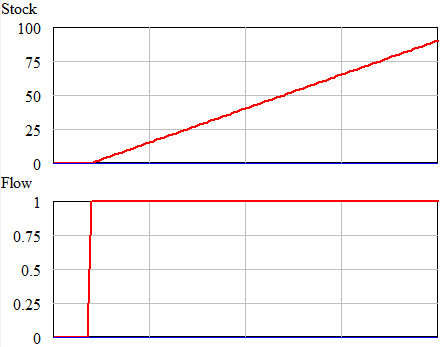

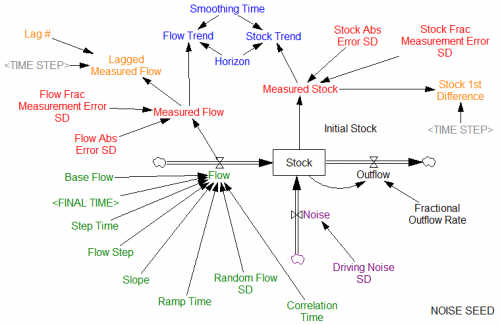

Bathtub Dynamics

Slides 27 and 28 seek a correlation between the CO2 and temperature time series. Failure is considered evidence that temperature is not significantly influenced by CO2. But this is a basic failure to appreciate bathtub dynamics. Temperature is an indicator of the accumulation of heat. Heat integrates radiative flux, which depends on GHG concentrations. So, even in a perfect system where CO2 is the only causal influence on temperature, we would not expect to see matching temporal trends in emissions, concentrations, and temperatures. How do you escape engineering school and design airplanes without knowing about integration?
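To see why the correlation test fails, here is a toy bathtub in Python. It is a minimal sketch, not a climate model: the sinusoidal inflow, the step size, and the function names are all assumptions chosen for illustration. The stock is driven by nothing but the flow, yet the two series are essentially uncorrelated over a full cycle, while the flow correlates almost perfectly with the change in the stock.

```python
import math


def pearson(xs, ys):
    """Plain Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy)


def simulate_bathtub(steps=1000, dt=0.01, period=10.0):
    """Euler-integrate a stock whose only input is a sinusoidal net flow."""
    flow, stock = [], []
    level = 0.0
    for i in range(steps):
        f = math.sin(2.0 * math.pi * (i * dt) / period)  # net inflow (hypothetical)
        flow.append(f)
        stock.append(level)
        level += f * dt  # the stock accumulates the flow
    return flow, stock


if __name__ == "__main__":
    flow, stock = simulate_bathtub()
    changes = [b - a for a, b in zip(stock, stock[1:])]
    print("corr(flow, stock):         ", round(pearson(flow, stock), 3))
    print("corr(flow, change in stock):", round(pearson(flow[:-1], changes), 3))
```

In this sketch the stock peaks when the flow crosses zero, a quarter cycle after the flow itself peaks. Anyone eyeballing the two series for matching wiggles would conclude that the flow has nothing to do with the stock, even though it is the stock's only cause.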

Correlation and causation

Slide 28 also engages in the fallacy of the single cause and denying the antecedent. It proposes that, because warming rates were roughly the same from 1915-1945 and 1970-2000, while CO2 concentrations varied, CO2 cannot be the cause of the observations. This of course presumes (falsely) that CO2 is the only influence on temperatures, neglecting volcanoes, endogenous natural variability, etc., not to mention blatantly cherry-picking arbitrary intervals.

Slide 14 shows another misattribution of single cause, comparing CO2 and temperature over 600 million years, ignoring little things like changes in the configuration of the continents and output of the sun over that long period.

In spite of the fact that Rutan generally argues against correlation as evidence for causation, Slide 46 presents correlations between orbital factors and sunspots (the latter smoothed in some arbitrary way) as evidence that these factors do drive temperature.

Feedback

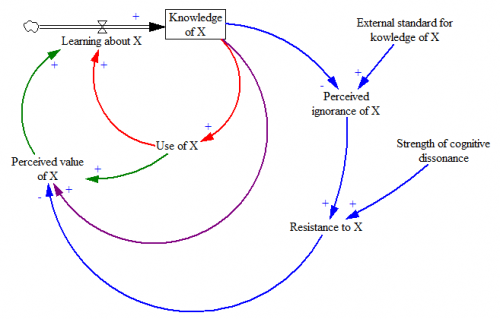

Slide 29 shows temperature leading CO2 in ice core records, concluding that temperature must drive CO2, and not the reverse. In reality, temperature and CO2 drive one another in a feedback loop. That turning points in temperature sometimes lead turning points in CO2 does not preclude CO2 from acting as an amplifier of temperature changes. (Recently there has been a little progress on this point.)
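A toy loop makes the lead/amplify distinction concrete. The sketch below is purely illustrative: the coefficients `a`, `b`, and `tau`, and the step forcing, are hypothetical numbers and not calibrated to any climate data. Temperature responds immediately to an outside push and to CO2. CO2 adjusts toward a temperature-dependent level with a lag.

```python
def simulate_loop(a=0.5, b=1.0, tau=5.0, dt=0.1, steps=1000, forcing=1.0):
    """Toy temperature-CO2 feedback loop (all parameters hypothetical).

    temp = forcing + a * co2        temperature responds to CO2
    d(co2)/dt = (b * temp - co2) / tau   CO2 follows temperature with a lag
    """
    temps, co2s = [], []
    co2 = 0.0
    for _ in range(steps):
        temp = forcing + a * co2
        temps.append(temp)
        co2s.append(co2)
        co2 += (b * temp - co2) / tau * dt
    return temps, co2s


if __name__ == "__main__":
    with_fb, co2 = simulate_loop(a=0.5)
    without_fb, _ = simulate_loop(a=0.0)
    print("final temperature, CO2 feedback on: ", round(with_fb[-1], 2))
    print("final temperature, CO2 feedback off:", round(without_fb[-1], 2))
    print("early CO2 as share of its final value:", round(co2[10] / co2[-1], 2))
```

In this setup temperature moves first and CO2 trails it, exactly the pattern on the slide, yet the final temperature change with the loop closed is roughly 1/(1 - a·b) times the change with it open. A lead in timing says nothing about which way the causal arrows point when there is a loop.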

Too small to matter

Slide 12 indicates that CO2 concentrations are too small to make a difference, which has no physical basis, other than the general misconception that small numbers don’t matter.

Computer models are not evidence

So Rutan claims on slide 47. Of course this is true in a trivial sense, because one can always build arbitrary models that bear no relationship to anything.

But why single out computer models? Mental models and pencil-and-paper calculations are not uniquely privileged. They are just as likely to fail to conform to data, laws of physics, and rules of logic as a computer model. In fact, because they’re not stated formally, testable automatically, or easily shared and critiqued, they’re more likely to contain some flaws, particularly mathematical ones. The more complex a problem becomes, the more the balance tips in favor of formal (computer) models, particularly in non-experimental sciences where trial-and-error is not practical.

There’s also no such thing as model-free inference. Rutan presents many of his charts as if data speaks for itself. In fact, no measurements can be taken without a model of the underlying process to be measured (in a thermometer, the thermal expansion of a fluid). More importantly, even the simplest trend calculation or comparison of time series implies a model. Leaving that model unstated just makes it easier to engage in bathtub fallacies and other errors in reasoning.

The bottom line

The problem here is that Rutan has no computer model. So, he feels free to assemble a dog’s breakfast of data, sourced from illustrious scientific institutions like the Heritage Foundation (slide 12), and call it evidence. Because he skips the exercise of trying to put everything into a rigorous formal feedback model, he’s received no warning signs that he has strayed far from reality.

I find this all rather disheartening. Clearly it is easy for a smart, technical person to be wildly incompetent outside his original field of expertise. But worse, it’s even easy for him to assemble a portfolio of pseudoscience that looks like evidence, capitalizing on past achievements to sucker a loyal following.

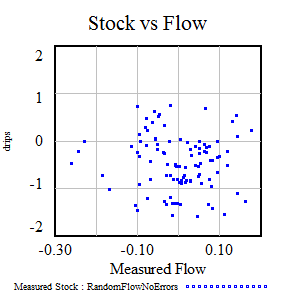

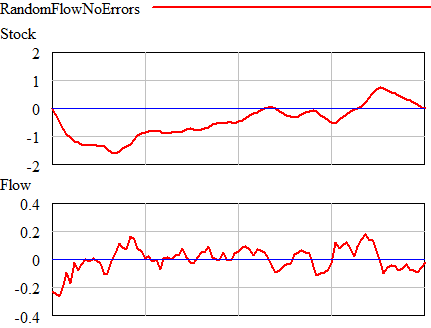

One could actually draw some superstitious conclusions about the stock and flow time series above by breaking them into apparent episodes, but that’s quite likely to mislead unless you’re thinking explicitly about the bathtub. Looking at a stock-flow scatter plot, it appears that there is no relationship: